After the particle physics degree

Physicist Meghan Anzelc is just as surprised as anyone that she wound up with an enjoyable career in the insurance industry.

Figuring out insurance rates and conducting particle physics research are more similar than one might expect. Both depend on interpreting complicated data using high-level mathematical analysis.

For particle physicist Meghan Anzelc, that overlap helped her transition from newly minted PhD to insurance cost analyst.

Today, as an assistant vice president at CNA in Chicago, Anzelc trains her group to use programming languages and database tools to predict insurance pricing for small-business clients. She says the job requires her to use many of the skills she learned in her undergraduate and graduate physics work: data manipulation, critical and analytical thinking, and working as a team.

A research trajectory

Anzelc says she didn’t exactly mean to go into particle physics. Interested in just a taste of the science, she signed up for a physics class while enrolled at Loyola University in Chicago, but by mistake she registered for the version of the class meant for physics majors.

But it turned out that Anzelc enjoyed the material enough to major in physics on purpose.

Over the three summers after she decided to study physics, she discovered her interest in research during internships at Fermi National Accelerator Laboratory and Argonne National Laboratory. “I liked the idea that you could collect data, and do analysis, and learn something about the world that nobody else knew before,” she says.

Anzelc pursued a doctorate in experimental high-energy physics at Northwestern University. She joined Fermilab’s DZero experiment and did her dissertation research on a type of particle decay that could be related to the matter-antimatter imbalance in our universe.

Although she loved research, Anzelc recognized during her doctoral program that she was not interested in a career in academia. She began talking to physicists who had gone into industry.

A friend introduced her to someone who had also studied physics but had switched to a career as a predictive modeler at Travelers Insurance.

“He could talk to me about how the skills translated, what he found challenging, what he liked about the work,” she says. “It was not a field or an industry that I had any awareness of, but it turned out it was a really good fit.”

To the private sector

After graduating with her doctorate in 2008, Anzelc joined a department at Travelers Insurance that focused on predictive modeling for personal policies such as automobile, home and renters insurance. Her analyses took into account a variety of inputs. For example, for automobile insurance, she considered: Where does the consumer live? What kind of car does the consumer drive? What is the consumer’s credit score? How many years of driving experience does the consumer have?

Her objective was to predict the likelihood that the consumer would get into an accident while insured with the company.

“You’re essentially trying to predict the future using historical information,” Anzelc says.
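As a rough illustration of predicting the future from historical information, the sketch below estimates accident frequency for groups of policyholders from past experience: accidents divided by insured years. The grouping, the record format and the sample numbers are all hypothetical; the article does not describe the models Anzelc built, and real pricing models combine many rating factors (location, vehicle, credit, driving experience) at once.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple

# Each record: (group label, years insured, accidents during that time). Hypothetical format.
Record = Tuple[str, float, int]


def accident_frequency_by_group(records: Iterable[Record]) -> Dict[str, float]:
    """Estimate accidents per insured-year for each group from historical records."""
    exposure: Dict[str, float] = defaultdict(float)  # total insured-years per group
    accidents: Dict[str, int] = defaultdict(int)     # total accidents per group
    for group, years, n_accidents in records:
        exposure[group] += years
        accidents[group] += n_accidents
    # Skip groups with no exposure to avoid dividing by zero.
    return {g: accidents[g] / exposure[g] for g in exposure if exposure[g] > 0}


history = [
    ("new driver", 1.0, 1),
    ("new driver", 2.0, 0),
    ("experienced driver", 5.0, 0),
    ("experienced driver", 5.0, 1),
]
rates = accident_frequency_by_group(history)
print(rates)  # new driver about 0.333 per year, experienced driver 0.1 per year
```

An estimate like this is only as good as the history behind it: with only a handful of insured-years per group, the rates are very noisy.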

In 2011, Anzelc left Travelers and joined CNA.

CNA's Vice President of Analytic Data Management Chuck Boucek praises Anzelc’s ability to incorporate information from many different sources. Over time the insurance industry has incorporated increasingly sophisticated models, he says. “Being able to separate what is information and what is noise in all of that data is a key skillset.”

After nearly three years as a predictive modeler at CNA, Anzelc moved to her current management position. While she no longer writes code or queries data herself, she manages a team that does.

Her main goals, she says, are to help her staff get rid of obstacles and make sure the group is moving forward.

Even in her new position, her past in particle physics is helping her out. She says managing her team members and communicating across ranks and departments require the same skills she needed to work on a collaboration with hundreds of fellow scientists at Fermilab.

Holometer rules out first theory of space-time correlations

The extremely sensitive quantum-spacetime-measuring tool will serve as a template for scientific exploration in the years to come.

Our common sense and the laws of physics assume that space and time are continuous. The Holometer, an experiment based at the US Department of Energy’s Fermi National Accelerator Laboratory, challenges this assumption.

We know that energy on the atomic level, for instance, is not continuous and comes in small, indivisible amounts. The Holometer was built to test if space and time behave the same way.

In a new result released this week after a year of data-taking (“Search for space-time correlations from the Planck scale with the Fermilab Holometer”), the Holometer collaboration has announced that it has ruled out one theory of a pixelated universe to a high level of statistical significance.

If space and time were not continuous, everything would be pixelated, like a digital image.

When you zoom in far enough, you see that a digital image is not smooth, but made up of individual pixels. An image can only store as much data as the number of pixels allows. If the universe were similarly segmented, then there would be a limit to the amount of information space-time could contain.

The main theory the Holometer was built to test was posited by Craig Hogan, a professor of astronomy and physics at the University of Chicago and the head of Fermilab’s Center for Particle Astrophysics. The Holometer did not detect the amount of correlated holographic noise—quantum jitter—that this particular model of space-time predicts.

But as Hogan emphasizes, it's just one theory, and with the Holometer, this team of scientists has proven that space-time can be probed at an unprecedented level.

“This is just the beginning of the story,” Hogan says. “We’ve developed a new way of studying space and time that we didn’t have before. We weren’t even sure we could attain the sensitivity we did.”

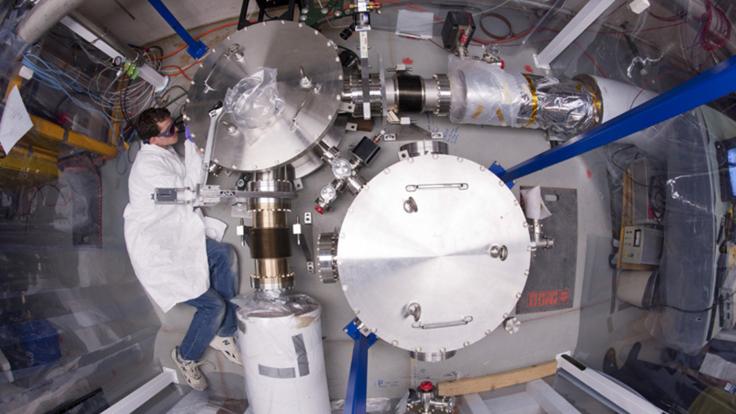

The Holometer isn’t much to look at. It’s a small array of lasers and mirrors with a trailer for a control room. But the low-tech look of the device belies the fact that it is an unprecedentedly sensitive instrument, able to measure movements that last only a millionth of a second and distances that are a billionth of a billionth of a meter—a thousand times smaller than a single proton.

The Holometer uses a pair of laser interferometers placed close to one another, each sending a 1-kilowatt beam of light through a beam splitter and down two perpendicular arms, 40 meters each. The light is then reflected back to the beam splitter, where the two beams recombine.

If no motion has occurred, then the recombined beam will be the same as the original beam. But if fluctuations in brightness are observed, researchers will then analyze these fluctuations to see if the splitter is moving in a certain way, being carried along on a jitter of space itself.
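The brightness change described above follows from simple interference. In an idealized Michelson interferometer with perfect mirrors, light returning from the two arms cancels completely at the output port when the arms are equal; if one arm changes length by ΔL, the round-trip path differs by 2ΔL and a fraction sin²(2πΔL/λ) of the light leaks out. The sketch below assumes that ideal case and a 1064-nanometer infrared wavelength, chosen for illustration rather than taken from the article.

```python
import math


def output_fraction(delta_length_m: float, wavelength_m: float = 1064e-9) -> float:
    """Fraction of the input light reaching the output port of an ideal Michelson
    interferometer when one arm is longer than the other by delta_length_m.

    The round-trip path difference is 2 * delta_length_m, giving a phase
    difference of 4*pi*delta_length_m / wavelength_m between the two beams.
    """
    phase = 4 * math.pi * delta_length_m / wavelength_m
    return math.sin(phase / 2) ** 2


wavelength = 1064e-9  # assumed laser wavelength in meters
for delta in (0.0, wavelength / 8, wavelength / 4, 1e-18):
    print(f"delta L = {delta:.3e} m -> output fraction {output_fraction(delta, wavelength):.3e}")
```

In this idealized picture a 10⁻¹⁸-meter shift lets through only a few times 10⁻²³ of the light, which gives a feel for how small the signals are.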

According to Fermilab’s Aaron Chou, project manager of the Holometer experiment, the collaboration looked to the work done to design other, similar instruments, such as the one used in the Laser Interferometer Gravitational-Wave Observatory experiment. Chou says that once the Holometer team realized that this technology could be used to study the quantum fluctuation they were after, the work of other collaborations using laser interferometers (including LIGO) was invaluable.

“No one has ever applied this technology in this way before,” Chou says. “A small team, mostly students, built an instrument nearly as sensitive as LIGO’s to look for something completely different.”

The challenge for researchers using the Holometer is to eliminate all other sources of movement until they are left with a fluctuation they cannot explain. According to Fermilab’s Chris Stoughton, a scientist on the Holometer experiment, the process of taking data was one of constantly adjusting the machine to remove more noise.

“You would run the machine for a while, take data, and then try to get rid of all the fluctuation you could see before running it again,” he says. “The origin of the phenomenon we’re looking for is a billion billion times smaller than a proton, and the Holometer is extremely sensitive, so it picks up a lot of outside sources, such as wind and traffic.”
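A natural way to use two neighboring interferometers is to look for noise they share. The toy model below assumes that each interferometer's output is a common jitter (standing in for a hypothetical space-time effect) plus its own independent local noise; averaging the product of the two outputs washes out the independent parts and leaves the variance of the shared part. The amplitudes, sample count and seed are arbitrary choices for illustration, and this is not the collaboration's actual analysis.

```python
import random


def cross_correlation(shared_amp: float, n: int = 200_000, seed: int = 1) -> float:
    """Average product of two simulated interferometer outputs.

    Each output = shared jitter (std shared_amp) + independent local noise (std 1).
    The independent parts average toward zero, so the result estimates shared_amp**2.
    """
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n):
        shared = rng.gauss(0.0, shared_amp)
        a = shared + rng.gauss(0.0, 1.0)  # interferometer A: shared + its own noise
        b = shared + rng.gauss(0.0, 1.0)  # interferometer B: shared + independent noise
        total += a * b
    return total / n


with_shared = cross_correlation(0.1)     # expect roughly 0.1**2 = 0.01
without_shared = cross_correlation(0.0)  # expect roughly 0
print(with_shared, without_shared)
```

The local noise here is ten times larger than the shared jitter, yet the shared part still emerges given enough samples, because the statistical scatter of the average shrinks roughly as 1/√n.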

If the Holometer were to see holographic noise that researchers could not eliminate, it might be detecting noise that is intrinsic to space-time, which may mean that information in our universe could actually be encoded in tiny packets in two dimensions.

The fact that the Holometer ruled out his theory to a high level of significance proves that it can probe time and space at previously unimagined scales, Hogan says. It also proves that if this quantum jitter exists, it is either much smaller than the Holometer can detect, or is moving in directions the current instrument is not configured to observe.

So what’s next? Hogan says the Holometer team will continue to take and analyze data, and will publish more general and more sensitive studies of holographic noise. The collaboration has already released a result related to the study of gravitational waves.

And Hogan is already putting forth a new model of holographic structure that would require similar instruments of the same sensitivity, but different configurations sensitive to the rotation of space. The Holometer, he says, will serve as a template for an entirely new field of experimental science.

“It’s new technology, and the Holometer is just the first example of a new way of studying exotic correlations,” Hogan says. “It is just the first glimpse through a newly invented microscope.”

The Holometer experiment is supported by funding from the DOE Office of Science. The Holometer collaboration includes scientists from Fermilab, the University of Chicago, the Massachusetts Institute of Technology and the University of Michigan.

What could dark matter be?

Scientists don’t yet know what dark matter is made of, but they are full of ideas.

Although nearly a century has passed since an astronomer first used the term “dark matter” in the 1930s, the elusive substance still defies explanation. Physicists can measure its effects on the movements of galaxies and other celestial objects, but what it’s made of remains a mystery.

In order to solve it, physicists have come up with myriad possibilities, plus a unique way to find each one. Some ideas for dark matter particles arose out of attempts to solve other problems in physics. Others are pushing the boundaries of what we understand dark matter to be.

“You don’t know which experiment is going to ultimately show it,” says Neal Weiner, a New York University physics professor. “And if you don’t think of the right experiment, then you might not find it. It’s not just going to hit you in the face, because it’s dark matter.”

WIMPs

The term WIMP encompasses many dark matter particles, some of which are discussed in this list.

Short for weakly interacting massive particles, WIMPs would have about 1 to 1000 times the mass of a proton and would interact only through gravity and the weak force, the force responsible for some types of radioactive decay.

If dark matter were a pop star, WIMPs would be Beyoncé. “WIMPs are the canonical candidate,” says Manoj Kaplinghat, a professor of physics and astronomy at the University of California, Irvine.

But a recent surge in data has cast new doubt on their existence. Despite the fact that scientists are hunting for them in experiments in space and on Earth, including ones at the Large Hadron Collider, WIMPs have yet to show themselves, making the restrictions on their mass, interaction strength and other properties ever tighter.

If WIMPs do fail to appear, the upshot will be a push for creative new solutions to the dark matter mystery—plus a chance to finally cross something off the list.

“If we don’t see it, it will at least end up closing the chapter on a really dominant paradigm that’s been the guide in the field for many, many years,” says Mariangela Lisanti, a theoretical particle physicist at Princeton University.

Sterile neutrinos

Neutrinos are almost massless particles that shape-shift from one type to another and can stream right through an entire planet without hitting a thing. As strange as they are, they may have an even odder counterpart known as sterile neutrinos.

These most elusive particles would be so unresponsive to their surroundings that it would take the entire age of the universe for just one to interact with another bit of matter.

If sterile neutrinos are the stuff of dark matter, their reluctance to interact might seem to spell doom for physicists hoping to detect them. But in a poetic twist, it’s possible that they decay into something we can find quite easily: photons, or particles of light.

“Photons, we’re pretty good at,” says Stefano Profumo, a physics professor at the University of California, Santa Cruz.

Last year, physicists using space-based telescopes discovered a steady signal with the energy predicted for decaying sterile neutrinos streaming from the centers of galaxy clusters. But the signal could originate from a different source, such as potassium ions. (Profumo proposed this idea in a paper provocatively titled “Dark matter searches going bananas.”) A new Japanese telescope known as ASTRO-H has much better energy resolution and may be able to put an end to the debate.
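The energy predicted for decaying sterile neutrinos comes from two-body kinematics. If a sterile neutrino at rest decays into an ordinary neutrino and a photon, the photon carries energy (m_s² − m_ν²)/(2m_s), which is essentially half the sterile neutrino's mass because ordinary neutrinos are so light. The decay channel and the 7 keV mass used below are assumptions for illustration.

```python
def photon_energy_kev(sterile_mass_kev: float, light_mass_kev: float = 0.0) -> float:
    """Photon energy (keV) from nu_s -> nu + photon with the sterile neutrino at rest.

    Energy-momentum conservation for a two-body decay into a massless photon
    gives E_photon = (m_s**2 - m_nu**2) / (2 * m_s), with masses in keV (c = 1).
    """
    if light_mass_kev >= sterile_mass_kev:
        raise ValueError("the decaying particle must be heavier than its products")
    return (sterile_mass_kev**2 - light_mass_kev**2) / (2 * sterile_mass_kev)


# Hypothetical 7 keV sterile neutrino; ordinary neutrino mass set below 1 eV (0.001 keV).
print(photon_energy_kev(7.0))         # 3.5
print(photon_energy_kev(7.0, 0.001))  # still about 3.5
```

Because such a line sits at a single sharp energy, an instrument with finer energy resolution can better tell it apart from nearby atomic emission lines such as those the potassium explanation invokes.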

Neutralinos

The canonical example of a WIMP, the neutralino, arises out of the theory of Supersymmetry. Supersymmetry posits that every known particle has a “super” partner and helps to fill some holes in the Standard Model, but its particles have stubbornly eluded observation.

Some of them, like the partners of the photon and the Z boson, have properties akin to dark matter. Dark matter could be a mix of these supersymmetric particles, and the one we’d be most likely to observe is known as the neutralino.

Discovering a neutralino would help solve two massive physics problems—it would tell us the identity of dark matter and would give us proof of the existence of Supersymmetry. But it would also leave physicists with the conundrum of all those other missing supersymmetric particles.

“If dark matter is a neutralino, it’s essentially telling us there’s a whole host of other new stuff that’s out there that’s just waiting to be discovered,” Lisanti says. “It opens up a floodgate of really, really interesting and very exciting work to be done.”

Asymmetric dark matter

In the beginning of the universe, matter and antimatter collided furiously, annihilating each other on contact until, somehow, only matter was left. But there’s nothing in the Standard Model of particle physics that says this must be so. Antimatter and matter should have existed in equal amounts, wiping each other out and leaving an empty universe.

That’s clearly not the case, and physicists don’t yet know why. It’s possible the same principle applies to dark matter. In a twist on the standard neutralino theory, which includes the property that neutralinos are their own antiparticle, an idea known as asymmetric dark matter proposes that anti-dark matter particles were wiped out by their dark matter counterparts, leaving behind the dark matter we see today. Finding asymmetric dark matter could help answer not only the question of what dark matter is, but also why we’re here to look for it.

Axions

As the search for WIMPs faces challenges, a particle known as the axion is generating new excitement.

The axion itself is not new. Physicists first imagined its existence in the late 1970s, shortly after Helen Quinn and Roberto Peccei published a landmark paper that helped to solve a problem with the strong nuclear force. While it’s been simmering in the background as a dark matter candidate for decades, experimentalists haven’t been able to search for it, until now.

“We’re just recently getting to the stage of having experiments that are able to probe the most interesting regions of axion parameter space,” says Stanford University physics professor Risa Wechsler.

The University of Washington’s Axion Dark Matter Experiment (ADMX) is on the hunt for axions, using a strong magnetic field to try to turn them into detectable photons. At the same time, theorists are beginning to imagine new types of axions, along with novel ways to search for them.

“There’s been a renaissance in axion theory, leading to a lot more excitement in axion experiments,” says UCI theoretical physicist Jonathan Feng.

Mirror world dark matter

Just as strange objects and creatures inhabited the world beyond Alice’s looking glass, dark matter might exist in an entirely separate world full of its own versions of all the elementary particles. These dark protons and neutrons would never interact with us, save through gravity, exerting a pull on matter in our world without leaving any other trace. “The only reason we know there’s something out there called dark matter is because of gravity,” Feng says. “This embodies that very beautifully.”

Beautiful as it may be, the theory leaves little hope for ever detecting dark matter. But there are hints that dark photons might be able to morph into regular photons, similar to the way neutrinos oscillate among flavors. This has spawned active research into understanding and finding these mysterious particles.
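Neutrino flavor oscillation, the analogy invoked here, has a standard two-state form: in vacuum, the probability of converting from one state to the other after traveling a distance L with energy E is sin²(2θ)·sin²(1.267·Δm²L/E), with Δm² in eV², L in kilometers and E in GeV. The sketch below implements that formula; treating dark-photon mixing the same way, with a tiny mixing angle θ, is an assumption made only to show how weak mixing suppresses conversion.

```python
import math


def conversion_probability(theta: float, delta_m2_ev2: float,
                           length_km: float, energy_gev: float) -> float:
    """Two-state vacuum oscillation probability.

    theta: mixing angle in radians; delta_m2_ev2: mass-squared difference (eV^2);
    length_km: distance traveled (km); energy_gev: particle energy (GeV).
    """
    phase = 1.267 * delta_m2_ev2 * length_km / energy_gev
    return math.sin(2 * theta) ** 2 * math.sin(phase) ** 2


# Maximal mixing at the first oscillation maximum converts everything.
l_max = math.pi / (2 * 1.267)  # km, for delta_m2 = 1 eV^2 and E = 1 GeV
print(conversion_probability(math.pi / 4, 1.0, l_max, 1.0))  # about 1.0
# A tiny (hypothetical) mixing angle makes conversion rare even at the maximum.
print(conversion_probability(1e-3, 1.0, l_max, 1.0))         # about 4e-6
```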

Extra dimensional dark matter

If dark matter doesn’t exist in another world entirely, it might live in a fourth spatial dimension unseen by humans and our experiments. Such a dimension would be too small for us to observe a particle’s movements within it. Instead, we would see multiple particles with the same charge but different masses, an idea proposed by Theodor Kaluza and Oskar Klein in the 1920s. One of these particles could be the dark matter particle, a much more recent concept known as Kaluza-Klein dark matter. These particles wouldn’t shine or reflect any light, explaining why dark matter can’t be seen by anyone in our three dimensions.
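In the simplest version of this picture, one extra dimension curled into a circle of radius R, a particle's momentum around the circle can only take discrete values, and each allowed value shows up to us as a separate particle with mass m_n = √(m_0² + (nħc/R)²) in energy units. The sketch assumes that textbook setup; the radius used in the example is hypothetical.

```python
import math
from typing import List

HBAR_C_GEV_M = 1.973269804e-16  # reduced Planck constant times c, in GeV·m


def kk_masses_gev(radius_m: float, n_max: int, zero_mode_gev: float = 0.0) -> List[float]:
    """Masses (GeV) of Kaluza-Klein modes n = 0..n_max for one extra dimension
    compactified on a circle of radius radius_m: m_n^2 = m_0^2 + (n*hbar*c/R)^2."""
    spacing = HBAR_C_GEV_M / radius_m  # energy scale set by the size of the extra dimension
    return [math.sqrt(zero_mode_gev**2 + (n * spacing) ** 2) for n in range(n_max + 1)]


# Hypothetical radius of 1e-18 m: the first excited mode lands near 200 GeV.
print(kk_masses_gev(1e-18, 3))
```

A smaller extra dimension spaces the tower farther apart. With the radius chosen here, the first excited mode falls within the 1-to-1000-proton-mass range quoted earlier for WIMPs.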

Confirming that dark matter exists in another dimension could also be seen as support for string theory, which requires extra dimensions to work.

“You can go out there and map out the extra-dimensional world just like 500 years ago people mapped out the continents,” Feng says.

SIMPs

Though physicists have never directly detected dark matter, they have a pretty good idea of how much of it exists, based on observations of galaxies. But observations of the inner regions of galaxies don't match up with some dark matter simulations, a puzzle physicists and astronomers are still working to solve.

Those simulations often assume that dark matter doesn’t interact with itself, but there’s no reason to believe that has to be the case. That realization has led to the concept of strongly interacting massive particles, or SIMPs, the latest newcomer to the crowded field of dark matter candidates. Simulations run with SIMPs seem to eliminate the discrepancy in other models, Feng says, and SIMPs, rather than sterile neutrinos, could even explain the strange photon signal emanating from galaxy clusters.

Composite dark matter

Dark matter could be none of these candidates—or it could be more than one.

“There is no reason for dark matter to be just one particle, not a single one,” Kaplinghat says. “We only assume it is for simplicity.”

After all, visible matter is made up of a swarm of particles, each with their own properties and behaviors, each able to combine with others in countless ways. Why should dark matter be any different?

Dark matter could have its own equivalents of quarks and gluons interacting to form dark baryons and other particles. There could be dark atoms, made of several particles linked together.

Whatever the case, dark matter is likely to keep physicists probing the depths of the universe for decades, revealing new mysteries even as old ones are solved.

Revamped LHC goes heavy metal

Physicists will collide lead ions to replicate and study the embryonic universe.

“In the beginning there was nothing, which exploded.”

~ Terry Pratchett, author

For the next three weeks physicists at the Large Hadron Collider will cook up the oldest form of matter in the universe by switching their subatomic fodder from protons to lead ions.

Lead ions consist of 82 protons and 126 neutrons clumped into tight atomic nuclei. When smashed together at extremely high energies, lead ions transform into the universe’s most nearly perfect liquid: the quark gluon plasma. Quark gluon plasma is the oldest form of matter in the universe; it is thought to have formed within microseconds of the big bang.

“The LHC can bring us back to that time,” says Rene Bellwied, a professor of physics at the University of Houston and a researcher on the ALICE experiment. “We can produce a tiny sample of the nascent universe and study how it cooled and coalesced to make everything we see today.”

Scientists first observed this prehistoric plasma after colliding gold ions in the Relativistic Heavy Ion Collider, a nuclear physics research facility located at the US Department of Energy’s Brookhaven National Laboratory.

“We expected to create matter that would behave like a gas, but it actually has properties that make it more like a liquid,” says Brookhaven physicist Peter Steinberg, who works on both RHIC and the ATLAS heavy ion program at the LHC. “And it’s not just any liquid; it’s a near perfect liquid, with a very uniform flow and almost no internal resistance.”

The LHC is famous for accelerating and colliding protons at the highest energies on Earth, but once a year physicists tweak its magnets and optimize its parameters for lead-lead or lead-proton collisions.

The lead ions are accelerated until each proton and neutron inside the nucleus has about 2.51 trillion electronvolts of energy. This might seem small compared to the 6.5 TeV protons that zoomed around the LHC ring during the summer. But because lead ions are so massive, they get a lot more bang for their buck.
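The arithmetic behind “more bang for their buck” is straightforward. Using the figures in the text (82 protons and 126 neutrons per lead nucleus, 2.51 TeV per nucleon, 6.5 TeV per proton), the sketch below totals the energy carried by one lead nucleus and by a head-on lead-lead collision. It assumes equal and opposite beams, so the collision energy is the sum of the two beam energies.

```python
PROTONS, NEUTRONS = 82, 126
NUCLEONS = PROTONS + NEUTRONS   # 208 nucleons in a lead-208 nucleus
TEV_PER_NUCLEON = 2.51          # lead-beam energy per proton or neutron (from the text)
TEV_PER_PROTON = 6.5            # proton-beam energy (from the text)

ion_energy = NUCLEONS * TEV_PER_NUCLEON  # energy carried by one lead nucleus
lead_collision = 2 * ion_energy          # two nuclei colliding head-on
nucleon_pair = 2 * TEV_PER_NUCLEON       # energy per colliding proton or neutron pair
proton_collision = 2 * TEV_PER_PROTON    # proton-proton collision energy

print(f"one lead nucleus: {ion_energy:.0f} TeV")
print(f"lead-lead collision: {lead_collision:.0f} TeV "
      f"({lead_collision / proton_collision:.0f}x a proton-proton collision)")
print(f"per nucleon pair: {nucleon_pair:.2f} TeV vs {proton_collision:.1f} TeV for protons")
```

Per colliding nucleon pair, the lead collisions are less energetic than proton collisions, but the total energy in a single lead-lead collision is roughly 80 times larger, which is the sense in which lead ions act as wrecking balls.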

“If protons were bowling balls, lead ions would be wrecking balls,” says Peter Jacobs, a scientist at Lawrence Berkeley National Laboratory working on the ALICE experiment. “When we collide them inside the LHC, the total energy generated is huge; reaching temperatures around 100,000 times hotter than the center of the sun. This is a state of matter we cannot make by just colliding two protons.”

Compared to the last round of LHC lead-lead collisions at the end of Run I, these collisions are nearly twice as energetic. New additions to the ALICE detector will also give scientists a more encompassing picture of the nascent universe’s behavior and personality.

“The system will be hotter, so the quark gluon plasma will live longer and expand more,” Bellwied says. “This increases our chances of producing new types of matter and will enable us to study the plasma’s properties more in depth.”

The Department of Energy’s Office of Science and the National Science Foundation support this research and sponsor the US-led upgrades to the LHC detectors.

Bellwied and his team are particularly interested in studying a heavy and metastable form of matter called strange matter. Strange matter is made up of clumps of quarks, much like the original colliding lead ions, but it contains at least one particularly heavy quark, called the strange quark.

“There are six quarks that exist in nature, but everything that is stable is made only out of the two lightest ones,” he says. “We want to see what other types of matter are possible. We know that matter containing strange quarks can exist, but how strange can we make it?”

Examining the composition, mass and stability of ‘strange’ matter could help illuminate how the early universe evolved and what role (if any) heavy quarks and metastable forms of matter played during its development.

Charge-parity violation

Matter and antimatter behave differently. Scientists hope that investigating how they differ might someday explain why we exist.

One of the great puzzles for scientists is why there is more matter than antimatter in the universe—the reason we exist.

It turns out that the answer to this question is deeply connected to the breaking of fundamental conservation laws of particle physics. The discovery of these violations has a rich history, dating back to 1956.

Parity violation

It all began with a study led by scientist Chien-Shiung Wu of Columbia University. She and her team were studying the decay of cobalt-60, an unstable isotope of the element cobalt. Cobalt-60 decays into another isotope, nickel-60, and in the process, it emits an electron and an electron antineutrino. The newly formed nickel-60 nucleus is left in an excited state and then releases its extra energy as a pair of photons.

The conservation law being tested was parity conservation, which states that the laws of physics shouldn’t change when all the signs of a particle’s spatial coordinates are flipped. The experiment observed the decay of cobalt-60 in two arrangements that mirrored one another.
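A small sketch makes the mirror operation concrete. Under parity, ordinary (polar) vectors such as position and momentum flip sign, but spin, which behaves like angular momentum (r × p), does not. The product of a nucleus's spin with the direction in which an electron flies off therefore changes sign in the mirror world, so if parity held, electrons would have to come out equally often along and against the spin. The vectors in the example are illustrative, not data from Wu's experiment.

```python
from typing import Tuple

Vec = Tuple[float, float, float]


def flip(v: Vec) -> Vec:
    """Reverse every component of a polar vector."""
    return (-v[0], -v[1], -v[2])


def parity(position: Vec, momentum: Vec, spin: Vec) -> Tuple[Vec, Vec, Vec]:
    """Apply the parity (mirror) transformation: position and momentum flip,
    spin is unchanged because it behaves like r x p, where both factors flip."""
    return flip(position), flip(momentum), spin


def dot(u: Vec, v: Vec) -> float:
    return sum(a * b for a, b in zip(u, v))


# Nuclear spin along +z; electron emitted along -z, i.e. against the spin.
spin = (0.0, 0.0, 1.0)
electron_p = (0.0, 0.0, -1.0)
_, mirrored_p, mirrored_spin = parity((0.0, 0.0, 0.0), electron_p, spin)

print(dot(spin, electron_p))           # -1.0: against the spin
print(dot(mirrored_spin, mirrored_p))  # 1.0: along the spin in the mirror world
```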

The release of photons in the decay is an electromagnetic process, and electromagnetic processes had been shown to conserve parity. But the release of the electron and electron antineutrino is a radioactive decay process, mediated by the weak force. Such processes had not been tested in this way before.

Parity conservation dictated that, in this experiment, the electrons should be emitted in the same direction and in the same proportion as the photons.

But Wu and her team found just the opposite to be true. This meant that nature was playing favorites. Parity, or P symmetry, had been violated.

Two theorists, Tsung-Dao Lee and Chen Ning Yang, who had suggested testing parity in this way, shared the 1957 Nobel Prize in physics for the discovery.

Charge-parity violation

Many scientists were flummoxed by the discovery of parity violation, says Ulrich Nierste, a theoretical physicist at the Karlsruhe Institute of Technology in Germany.

“Physicists then began to think that they may have been looking at the wrong symmetry all along,” he says.

The finding had ripple effects. For one, scientists learned that another symmetry they thought was fundamental—charge conjugation, or C symmetry—must be violated as well.

Charge conjugation is a symmetry between particles and their antiparticles. When applied to particles with a property called spin, like quarks and electrons, the C and P transformations are in conflict with each other.


This means that neither can be a good symmetry if one of them is violated. But, scientists thought, the combination of the two—called CP symmetry—might still be conserved. If that were the case, there would at least be a symmetry between the behavior of particles and their oppositely charged antimatter partners.

Alas, this also was not meant to be. In 1964, a research group led by James Cronin and Val Fitch discovered in an experiment at Brookhaven National Laboratory that CP is violated, too.

The team studied the decay of neutral kaons into pions; both are composite particles made of a quark and antiquark. Neutral kaons come in two versions that have different lifetimes: a short-lived one that primarily decays into two pions and a long-lived relative that prefers to leave three pions behind.

However, Cronin, Fitch and their colleagues found that, rarely, long-lived kaons also decayed into two instead of three pions, which required CP symmetry to be broken.
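The reasoning can be sketched with CP eigenvalues. A neutral pion has C = +1 and intrinsic parity −1, so each pion contributes a factor of −1 under CP; for pions with no orbital angular momentum among them, the factors simply multiply. The long-lived neutral kaon is, to a very good approximation, the CP = −1 state, so three pions (CP = −1) are an allowed final state and two pions (CP = +1) are not, unless CP is broken. The zero-angular-momentum assumption is a simplification.

```python
def cp_of_neutral_pions(n_pions: int) -> int:
    """CP eigenvalue of n neutral pions with zero orbital angular momentum.

    Each pi0 has C = +1 and parity P = -1, so CP = -1 per pion; the total is the
    product. (A pi+ pi- pair with zero orbital angular momentum also has CP = +1.)
    """
    if n_pions < 1:
        raise ValueError("need at least one pion")
    return (-1) ** n_pions


K_LONG_CP = -1  # long-lived neutral kaon, approximately CP-odd

for n in (2, 3):
    allowed = cp_of_neutral_pions(n) == K_LONG_CP
    print(f"K_long -> {n} pions allowed if CP is conserved: {allowed}")
```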

The discovery of CP violation was recognized with the 1980 Nobel Prize in physics. And it led to even more discoveries.

It prompted theorists Makoto Kobayashi and Toshihide Maskawa to predict in 1973 the existence of a new generation of elementary particles. At the time, only two generations were known. Within a few years, experiments at SLAC National Accelerator Laboratory found the tau particle, the third generation of a group including electrons and muons. Scientists at Fermi National Accelerator Laboratory later discovered a third generation of quarks: bottom and top quarks.

Digging further into CP violation

In the late 1990s, scientists at Fermilab and European laboratory CERN found more evidence of CP violation in decays of neutral kaons. And starting in 1999, the BaBar experiment at SLAC and the Belle experiment at KEK in Japan began to look into CP violation in decays of composite particles called B mesons.

By analyzing dozens of different types of B meson decays, scientists on BaBar and Belle revealed small differences in the way B mesons and their antiparticles fall apart. The results matched the predictions of Kobayashi and Maskawa, and in 2008 their work was recognized with one half of the physics Nobel Prize.
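The small differences are usually quoted as an asymmetry: the difference between the number of antiparticle and particle decays to the same final state, divided by their sum. The sketch below computes that ratio and a simple counting uncertainty from hypothetical event counts. BaBar and Belle actually measured how such asymmetries vary with decay time and had to correct for detector effects, so this shows only the basic idea, and the sign convention used is one of several in use.

```python
import math
from typing import Tuple


def cp_asymmetry(n_antiparticle: int, n_particle: int) -> Tuple[float, float]:
    """Return (A, sigma_A) with A = (N_bar - N) / (N_bar + N).

    sigma_A = sqrt((1 - A**2) / (N_bar + N)) follows from binomial counting
    statistics and ignores backgrounds and detector effects.
    """
    total = n_antiparticle + n_particle
    if total == 0:
        raise ValueError("no decays counted")
    a = (n_antiparticle - n_particle) / total
    sigma = math.sqrt((1.0 - a * a) / total)
    return a, sigma


# Hypothetical counts: 5,200 antiparticle decays vs 4,800 particle decays.
a, sigma = cp_asymmetry(5200, 4800)
print(f"A = {a:.3f} +/- {sigma:.3f} ({a / sigma:.1f} standard deviations from zero)")
```

An asymmetry of a few percent needs on the order of ten thousand decays just to stand out at this level, which is why pooling data sets can reveal effects that neither experiment could see alone.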

“But checking if the experimental data agree with the theory was only one of our goals,” says BaBar spokesperson Michael Roney of the University of Victoria in Canada. “We also wanted to find out if there is more to CP violation than we know.”

This is because these experiments are seeking to answer a big question: Why are we here?

When the universe formed in the big bang 14 billion years ago, it should have generated matter and antimatter in equal amounts. If nature treated both exactly the same way, matter and antimatter would have annihilated each other, leaving nothing behind but energy.

And yet, our matter-dominated universe exists.

CP violation is essential to explain this imbalance. However, the amount of CP violation observed in particle physics experiments so far is a million to a billion times too small.

Current and future studies

Recently, BaBar and Belle combined their data treasure troves in a joint analysis (“First observation of CP violation in B0 → D(*)CP h0 decays by a combined time-dependent analysis of BaBar and Belle data”). It revealed for the first time CP violation in a class of B meson decays that each experiment couldn't have analyzed alone due to limited statistics.

This and all other studies to date are in full agreement with the standard theory. But researchers are far from giving up hope on finding unexpected behaviors in processes governed by CP violation.

Belle II, currently under construction at KEK, will produce B mesons at a much higher rate than its predecessor, enabling future CP violation studies with higher precision.

And the LHCb experiment at CERN’s Large Hadron Collider is continuing studies of B mesons, including heavier ones that were only rarely produced in the BaBar and Belle experiments. The experiment will be upgraded in the future to collect data at 10 times the current rate.

To date, CP violation has been observed only in particles made of quarks, like these.

“We know that the types of CP violation already seen using some quark decays cannot explain matter’s dominance in the universe,” says LHCb collaboration member Sheldon Stone of Syracuse University. “So the question is: Where else could we possibly find CP violation?”

One place for it to hide could be in the decay of the Higgs boson. Another place to look for CP violation is in the behavior of elementary leptons—electrons, muons, taus and their associated neutrinos. It could also appear in different kinds of quark decays.

“To explain the evolution of the universe, we would need a large amount of extra CP violation,” Nierste says. “It’s possible that this mechanism involves unknown particles so heavy that we’ll never be able to create them on Earth.”

Such heavyweights could only have been produced in the very early universe and could be related to the lack of antimatter in the universe today. Researchers are searching for CP violation in much lighter neutrinos, which could give us a glimpse of a possible large violation at high masses.

The search continues.

Physicists get a supercomputing boost

Scientists have made the first-ever calculation of a prediction involving the decay of certain matter and antimatter particles.

Sometimes the tiniest difference between a prediction and a result can tell scientists that a theory isn’t quite right and it’s time to head back to the drawing board.

One way to find such a difference is to refine your experimental methods to get more and more precise results. Another way to do it: refine the prediction instead. Scientists recently showed the value of taking this tack using some of the world’s most powerful supercomputers.

An international team of scientists has made the first-ever calculation of an effect called direct charge-parity violation, or direct CP violation: a difference between the decay of matter particles and that of their antimatter counterparts.

They made their calculation using Blue Gene/Q supercomputers at the RIKEN BNL Research Center at Brookhaven National Laboratory, at the Argonne Leadership Computing Facility at Argonne National Laboratory, and at the DiRAC facility at the University of Edinburgh.

Their work took more than 200 million supercomputer core processing hours—roughly the equivalent of 2000 years on a standard laptop. The project was funded by the US Department of Energy’s Office of Science, the RIKEN Laboratory of Japan and the UK Science and Technology Facilities Council.
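The laptop comparison can be unpacked with a little arithmetic. The article does not say how it converts supercomputer time to laptop time, so this sketch simply takes the two quoted figures at face value (200 million core hours, 2000 laptop-years) and works out what they imply; the resulting "laptop equals about a dozen supercomputer cores" reading is an assumption, not a stated benchmark.

```python
HOURS_PER_YEAR = 365.25 * 24  # 8766 hours in an average year

core_hours = 200e6     # "more than 200 million" core hours, from the article
laptop_years = 2000    # the article's laptop comparison

# Total computing expressed as years of a single core running nonstop.
core_years = core_hours / HOURS_PER_YEAR
# How many supercomputer cores one "standard laptop" must match for the
# two figures to agree (an implied ratio, not a measured one).
implied_laptop_cores = core_years / laptop_years

print(f"{core_years:,.0f} core-years of computing")
print(f"implied laptop throughput: ~{implied_laptop_cores:.1f} supercomputer cores")
```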

The scientists compared their calculated prediction with experimental results established in 2000 at the European physics laboratory CERN and at Fermi National Accelerator Laboratory.

Scientists first discovered evidence of indirect CP violation in a Nobel Prize-winning experiment at Brookhaven Lab in 1964. It took them another 36 years to find evidence of direct CP violation.

“This so-called ‘direct’ symmetry violation is a tiny effect, showing up in just a few particle decays in a million,” says Brookhaven physicist Taku Izubuchi, a member of the team that performed the calculation.

Physicists look to CP violation to explain the preponderance of matter in the universe. After the big bang, there should have been equal amounts of matter and antimatter, which should have annihilated with one another. A difference between the behavior of matter and antimatter could explain why that didn’t happen.

Scientists have found evidence of some CP violation—but not enough to explain why our matter-dominated universe exists.

The supercomputer calculations, published in Physical Review Letters (“Standard Model prediction for direct CP violation in K→ππ decay”), so far show no difference between the predicted and the measured direct CP violation.

But scientists expect to double the accuracy of their calculated prediction within two years, says Peter Boyle of the University of Edinburgh. “This leaves open the possibility that evidence for new phenomena… may yet be uncovered.”
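Why a more precise prediction matters can be sketched with a standard comparison: the gap between prediction and measurement divided by their combined uncertainty, assuming independent Gaussian errors added in quadrature. The numbers below are hypothetical and in arbitrary units, not the published K→ππ values, and "double the accuracy" is read here as halving the prediction's uncertainty while its central value stays put.

```python
import math

def tension_sigma(pred: float, pred_err: float, meas: float, meas_err: float) -> float:
    """Difference between prediction and measurement in units of the
    combined uncertainty (errors assumed independent and Gaussian)."""
    return abs(pred - meas) / math.hypot(pred_err, meas_err)

# Hypothetical values in arbitrary units (illustration only).
prediction, pred_err = 5.0, 10.0
measurement, meas_err = 20.0, 1.0

now = tension_sigma(prediction, pred_err, measurement, meas_err)
# Halve the prediction's uncertainty, keep everything else fixed.
later = tension_sigma(prediction, pred_err / 2, measurement, meas_err)

print(f"today: {now:.1f} sigma; with a twice-as-precise prediction: {later:.1f} sigma")
```

When the prediction's uncertainty dominates, as in this made-up case, halving it roughly doubles the significance of any real gap, which is how "no difference" today could become a hint of new phenomena later.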

Shrinking the accelerator

Scientists plan to use a newly awarded grant to develop a shoebox-sized particle accelerator in five years.

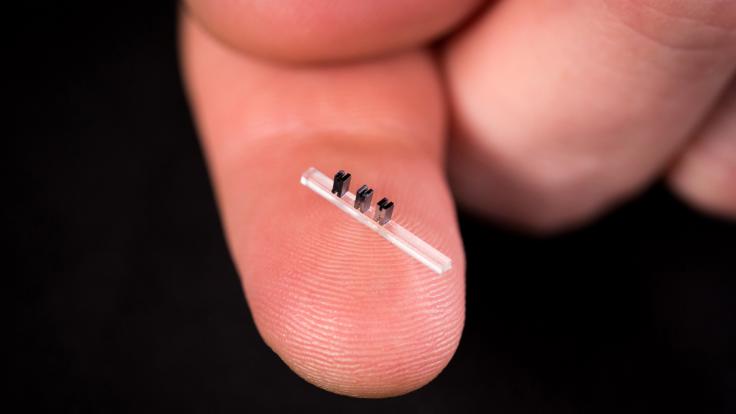

The Gordon and Betty Moore Foundation has awarded $13.5 million to Stanford University for an international effort, including key contributions from the Department of Energy’s SLAC National Accelerator Laboratory, to build a working particle accelerator the size of a shoebox. It’s based on an innovative technology known as “accelerator on a chip.”

This novel technique, which uses laser light to propel electrons through a series of artfully crafted glass chips, has the potential to revolutionize science, medicine and other fields by dramatically shrinking the size and cost of particle accelerators.

“Can we do for particle accelerators what the microchip industry did for computers?” says SLAC physicist Joel England, an investigator with the 5-year project. “Making them much smaller and cheaper would democratize accelerators, potentially making them available to millions of people. We can’t even imagine the creative applications they would find for this technology.”

Robert L. Byer, a Stanford professor of applied physics and co-principal investigator for the project who has been working on the idea for 40 years, says, “Based on our proposed revolutionary design, this prototype could set the stage for a new generation of ‘tabletop’ accelerators, with unanticipated discoveries in biology and materials science and potential applications in security scanning, medical therapy and X-ray imaging.”

The chip that launched an international quest

The international effort to make a working prototype of the little accelerator was inspired by experiments led by scientists at SLAC and Stanford and, independently, at Friedrich-Alexander University Erlangen-Nuremberg (FAU) in Germany. Both teams demonstrated the potential for accelerating particles with lasers in papers published on the same day in 2013.

In the SLAC/Stanford experiments, published in Nature, electrons were first accelerated to nearly light speed in a SLAC accelerator test facility. At this point they were going about as fast as they could go, and any additional acceleration would boost their energy, not their speed.
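The point about energy versus speed follows from special relativity: an electron's speed is v/c = √(1 − 1/γ²), with γ = E/(mₑc²). This sketch uses illustrative total energies, not the SLAC test facility's actual beam parameters, to show that once an electron carries tens of MeV, doubling its energy barely changes its speed.

```python
import math

ELECTRON_REST_ENERGY_MEV = 0.51099895  # m_e c^2

def speed_fraction_of_c(total_energy_mev: float) -> float:
    """v/c for an electron with the given total energy (must exceed m_e c^2),
    using gamma = E / (m_e c^2) and v/c = sqrt(1 - 1/gamma**2)."""
    gamma = total_energy_mev / ELECTRON_REST_ENERGY_MEV
    return math.sqrt(1.0 - 1.0 / gamma**2)

# Illustrative energies only.
for energy in (1.0, 10.0, 60.0, 120.0):
    print(f"{energy:6.1f} MeV -> v/c = {speed_fraction_of_c(energy):.8f}")
```

Going from 60 to 120 MeV in this sketch changes the speed only in the fifth decimal place, so the extra push shows up almost entirely as energy.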

The speeding electrons then entered a chip made of silica and traveled through a microscopic tunnel that had tiny ridges carved into its walls. Laser light shining on the chip interacted with those ridges and produced an electric field that boosted the energy of the passing electrons.

In the experiments, the chip achieved an acceleration gradient, or energy boost over a given distance, roughly 10 times higher than the existing 2-mile-long SLAC linear accelerator can provide. If the technology reaches its full potential, the SLAC accelerator’s energy could be matched by a series of accelerator chips 100 meters long, roughly the length of a football field.
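The size comparison rests on a simple relation: energy gained equals gradient times length, so matching a fixed energy with an N-times-higher gradient takes 1/N of the length. This sketch assumes a uniform gradient with no gaps between chips and ignores focusing and beam-transport hardware, so it is only a back-of-the-envelope check of the article's figures.

```python
MILE_M = 1609.344

slac_length_m = 2 * MILE_M  # the "2-mile-long" SLAC linac from the article

def length_for_same_energy(reference_length_m: float, gradient_ratio: float) -> float:
    """Energy gain = gradient x length, so an N-times-higher gradient
    reaches the same energy in 1/N of the length (uniform gradient assumed)."""
    return reference_length_m / gradient_ratio

# Length needed at the roughly 10x gradient demonstrated so far.
print(f"10x gradient: {length_for_same_energy(slac_length_m, 10):.0f} m")
# Gradient ratio implied by the 100-meter "full potential" figure.
print(f"ratio implied by 100 m: {slac_length_m / 100:.0f}x")
```

The demonstrated tenfold gradient alone would shorten the linac to about 320 meters; the 100-meter figure corresponds to a gradient roughly 30 times SLAC's.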

In a parallel approach, experiments led by Peter Hommelhoff of FAU and published in Physical Review Letters demonstrated that a laser could also be used to accelerate lower-energy electrons that had not first been boosted to nearly light speed. Both results taken together open the door to a compact particle accelerator.

A tough, high-payoff challenge

For the past 75 years, particle accelerators have been an essential tool for physics, chemistry, biology and medicine, leading to multiple Nobel Prize-winning discoveries. They are used to collide particles at high energies for studies of fundamental physics, and also to generate intense X-ray beams for a wide range of experiments in materials, biology, chemistry and other fields. This new technology could lead to progress in these fields by reducing the cost and size of high-energy accelerators.

The challenges of building the prototype accelerator are substantial. Demonstrating that a single chip works was an important step; now scientists must work out the optimal chip design and the best way to generate and steer electrons, distribute laser power among multiple chips and make electron beams that are 1000 times smaller in diameter to go through the microscopic chip tunnels, among a host of other technical details.

“The chip is the most crucial ingredient, but a working accelerator is way more than just this component,” says Hommelhoff, a professor of physics and co-principal investigator of the project. “We know what the main challenges will be, and we don’t know how to solve them yet. But as scientists we thrive on this type of challenge. It requires a very diverse set of expertise, and we have brought a great crowd of people together to tackle it.”

The Stanford-led collaboration includes world-renowned experts in accelerator physics, laser physics, nanophotonics and nanofabrication. SLAC and two other national laboratories, Deutsches Elektronen-Synchrotron (DESY) in Germany and Paul Scherrer Institute in Switzerland, will contribute expertise and make their facilities available for experiments. In addition to FAU, five other universities are involved in the effort: University of California, Los Angeles; Purdue University; University of Hamburg; the Swiss Federal Institute of Technology in Lausanne (EPFL); and Technical University of Darmstadt.

“The accelerator-on-a-chip project has terrific scientists pursuing a great idea. We’ll know they’ve succeeded when they advance from the proof of concept to a working prototype,” says Robert Kirshner, chief program officer of science at the Gordon and Betty Moore Foundation. “This research is risky, but the Moore Foundation is not afraid of risk when a novel approach holds the potential for a big advance in science. Making things small to produce immense returns is what Gordon Moore did for microelectronics.”

Cleanroom is a verb

It’s not easy being clean.

Although they might be invisible to the naked eye, contaminants less than a micron in size can ruin very sensitive experiments in particle physics.

Flakes of skin, insect parts and other air-surfing particles—collectively known as dust—force scientists to construct or conduct certain experiments in cleanrooms, special places with regulated contaminant levels. There, scientists use a variety of tactics to keep their experiments dust-free.

The enemy within

Cleanrooms are classified by how many particles are found in a cubic foot of space. The fewer the particles, the cleaner the cleanroom.
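A classification check can be sketched in a few lines. This assumes the US-style convention the article's "class-2000" and "class-1000" labels follow, where class N allows at most N particles of 0.5 micron or larger per cubic foot, and compares it with the ISO 14644-1 limit formula, 10^N × (0.1/D)^2.08 particles per cubic meter for particle size D in microns. The measured count is hypothetical.

```python
CUBIC_FEET_PER_CUBIC_METER = 35.3147

def meets_class(particles_per_ft3: float, cleanroom_class: int) -> bool:
    """US-style class N: at most N particles (>= 0.5 micron) per cubic foot."""
    return particles_per_ft3 <= cleanroom_class

def iso_limit_per_m3(iso_class: float, particle_size_um: float = 0.5) -> float:
    """ISO 14644-1 limit: 10**N * (0.1 / D)**2.08 particles per cubic meter."""
    return 10**iso_class * (0.1 / particle_size_um) ** 2.08

# Hypothetical measured count of 1,500 particles per cubic foot.
print(meets_class(1_500, 2000), meets_class(1_500, 1000))  # True False
# Typical indoor air from the article (~1 million per cubic foot) fails both.
print(meets_class(1_000_000, 2000))  # False
# ISO class 6 at 0.5 micron, converted to particles per cubic foot.
print(f"{iso_limit_per_m3(6) / CUBIC_FEET_PER_CUBIC_METER:.0f} per cubic foot")
```

The last line shows that ISO class 6 works out to roughly 1000 particles per cubic foot, which is why a class-1000 room is often described as ISO class 6.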

To prevent contaminating particles from getting in, everything that enters cleanrooms must be covered or cleaned, including the people. Scratch that: especially the people.

“People are the dirtiest things in a cleanroom,” says Lisa Kaufman, assistant professor of nuclear physics at Indiana University. “We have to protect experiment detectors from ourselves.”

Humans are walking landfills as far as a cleanroom is concerned. We shed hair and skin incessantly, both of which form dust. Our bodies and clothes also carry dust and dirt. Even our fingerprints can be adversaries.

“Your fingers are terrible. They’re oily and filled with contaminants,” says Aaron Roodman, professor of particle physics and astrophysics at SLAC National Accelerator Laboratory.

In a detector that is sensitive to radioactivity, the potassium in a single fingerprint can create a flurry of false signals that could cloud the real signals the experiment seeks.
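Potassium is a concern because a small fraction of natural potassium is the radioactive isotope potassium-40. This sketch estimates its activity from standard constants (an abundance of about 0.0117 percent and a half-life of about 1.25 billion years) using A = λN. The article does not say how much potassium a fingerprint leaves behind, so the 1-microgram residue below is purely hypothetical.

```python
import math

AVOGADRO = 6.02214076e23
POTASSIUM_MOLAR_MASS_G = 39.098
K40_ABUNDANCE = 1.17e-4                # fraction of natural potassium that is K-40
SECONDS_PER_YEAR = 3.156e7
K40_HALF_LIFE_S = 1.248e9 * SECONDS_PER_YEAR

def potassium_activity_bq(mass_g: float) -> float:
    """Decays per second from the K-40 in a mass of natural potassium (A = lambda * N)."""
    k40_atoms = mass_g / POTASSIUM_MOLAR_MASS_G * AVOGADRO * K40_ABUNDANCE
    decay_constant = math.log(2) / K40_HALF_LIFE_S
    return decay_constant * k40_atoms

per_gram = potassium_activity_bq(1.0)
print(f"natural potassium: about {per_gram:.0f} decays per second per gram")

# Hypothetical 1-microgram residue, counted over one year of data taking.
decays_per_year = potassium_activity_bq(1e-6) * SECONDS_PER_YEAR
print(f"1 microgram: about {decays_per_year:.0f} decays per year")
```

Even a microgram-scale smudge in this estimate yields on the order of a thousand decays a year, a large background for an experiment hunting for a handful of rare events.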

As a cleanroom’s greatest enemy, humans must cover up completely to go inside: A zip-up coverall, known as a bunny suit, sequesters shed skin. (Despite its name, the bunny suit lacks floppy ears and a fluffy tail.) Shower-cap-like headgear holds in hair. Booties cover soiled shoes. Gloves are a must-have. In particularly clean cleanrooms, or for scientists sporting burly beards, facemasks may be necessary as well.

These items keep the number of particles brought into a cleanroom at a minimum.

“In a normal place, if you have some surface that’s unattended, that you don’t dust, after a few days you’ll see lots and lots of stuff,” Roodman says. “In a cleanroom, you don’t see anything.”

Getting to nothing, however, can take a lot more work than just covering up.

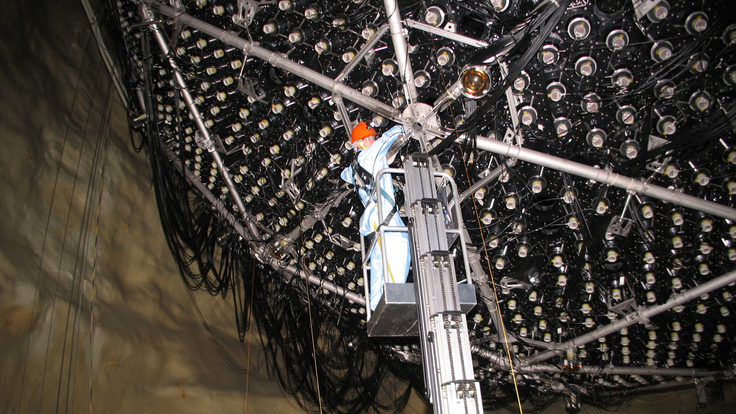

Washing up at SNOLAB

“This one undergrad who worked here put it, ‘Cleanroom is a verb, not a noun.’ Because the way you get a cleanroom clean is by constantly cleaning,” says research scientist Chris Jillings.

Jillings works at SNOLAB, an underground laboratory studying neutrinos and dark matter. The lab is housed in an active Canadian mine.

It seems an unlikely place for a cleanroom. And yet the entire 50,000-square-foot lab is considered a class-2000 cleanroom, meaning there are fewer than 2000 particles per cubic foot. Your average indoor space may have as many as 1 million particles per cubic foot.

SNOLAB’s biggest concern is mine dust, because it contains uranium and thorium. These radioactive elements can upset sensitive detectors in SNOLAB experiments, such as DEAP-3600, which is searching for the faint whisper of dark matter. Uranium and thorium could leave signals in its detector that look like evidence of dark matter.


Most workplaces can’t guarantee that all of their employees shower before work, but SNOLAB can. Everyone entering SNOLAB must shower on their way in and re-dress in a set of freshly laundered clothes.

“We’ve sort of made it normal. It doesn’t seem strange to us,” says Jillings, who works on DEAP-3600. “It saves you a few minutes in the morning because you don’t have to shower at home.” More importantly, showering removes mine dust.

SNOLAB also regularly wipes down every surface and constantly filters the air.

Clearing the air for EXO

Endless air filtration is a mainstay of all modern cleanrooms. Willis Whitfield, a former physicist at Sandia National Laboratories, invented the modern cleanroom in 1962 by introducing this continuous filtered airflow to flush out particles.
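How continuous airflow flushes out particles can be sketched with a simple dilution model. It assumes a well-mixed room supplied with particle-free air and no new particles being shed, so the concentration decays as C(t) = C₀·e^(−nt) for n air changes per hour. Real cleanrooms often use directional airflow and people keep shedding particles, so this is a simplification, and the air-change rates below are hypothetical.

```python
import math

def remaining_fraction(air_changes_per_hour: float, hours: float) -> float:
    """Well-mixed room fed with particle-free air: C(t) / C0 = exp(-n * t)."""
    return math.exp(-air_changes_per_hour * hours)

def hours_to_reduce(factor: float, air_changes_per_hour: float) -> float:
    """Time for the particle concentration to fall by the given factor."""
    return math.log(factor) / air_changes_per_hour

# Hypothetical: start at the article's ~1 million particles per cubic foot
# and flush down to a class-1000 level (a 1000-fold reduction).
for ach in (5, 20, 60):
    minutes = 60 * hours_to_reduce(1e6 / 1000, ach)
    print(f"{ach:>3} air changes/h -> {minutes:.1f} minutes")
```

Because the decay is exponential, the cleanup time scales inversely with the air-change rate, which is one reason cleanrooms keep the filtered air moving constantly.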

The filtered, pressurized, dehumidified air can make those who work in cleanrooms thirsty and contact-wearers uncomfortable.

“You get used to it over time,” says Kaufman, who works in a cleanroom for the SLAC-headed Enriched Xenon Observatory experiment, EXO-200.

EXO-200 is another testament to particle physicists’ affinity for mines. The experiment hunts for extremely rare double beta decay events at WIPP, a salt mine in New Mexico, in its own class-1000 cleanroom—even cleaner than SNOLAB.

As with SNOLAB experiments, anything emitting even the faintest amount of radiation is a foe of EXO-200. Though those entering EXO-200’s cleanroom don’t have to shower, they do have to wash their arms, ears, face, neck and hands before covering up.

Ditching the dust for LSST

SLAC recently finished building another class-1000 cleanroom, specifically for assembling the camera for the Large Synoptic Survey Telescope. The LSST camera will take more than four years to build and will be the largest digital camera ever built.

While SNOLAB and the EXO-200 cleanroom are mostly concerned with the radioactivity in particles containing uranium, thorium or potassium, LSST is wary of even the physical presence of particles.

“If you’ve got parts that have to fit together really precisely, even a little dust particle can cause problems,” Roodman says. Dust can block or absorb light in various parts of the LSST camera.

LSST’s parts are also vulnerable to static electricity. Built-up static electricity can wreck camera parts in a sudden zap known as an electrostatic discharge event.

To reduce the chance of a zap, the LSST cleanroom features static-dissipating floors, and all of its benches and tables are grounded. Once again, humans prove to be the worst offenders.

“Most electrostatic discharge events are generated from humans,” says Jeff Tice, LSST cleanroom manager. “Your body is a capacitor and able to store a charge.”

Scientists assembling the camera will wear static-reducing garments as well as antistatic wrist straps that ground them to the floor and prevent the buildup of static electricity.
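Tice's point that the body acts as a capacitor can be made concrete with Q = CV and E = CV²/2. This sketch borrows the human-body-model values commonly used in electrostatic discharge testing (about 100 picofarads in series with 1.5 kilohms) and the roughly 1-megohm resistor typically built into a wrist strap; the 5,000-volt charge-up is a hypothetical figure, not an LSST measurement.

```python
# Human-body-model values used in ESD testing: ~100 pF and ~1.5 kOhm.
BODY_CAPACITANCE_F = 100e-12
HBM_RESISTANCE_OHM = 1.5e3
WRIST_STRAP_RESISTANCE_OHM = 1e6  # typical series resistor in a wrist strap

def stored_charge_and_energy(voltage_v: float) -> tuple[float, float]:
    """Charge Q = C V and energy E = C V**2 / 2 stored on a charged body."""
    q = BODY_CAPACITANCE_F * voltage_v
    e = 0.5 * BODY_CAPACITANCE_F * voltage_v**2
    return q, e

volts = 5_000.0  # hypothetical static charge-up
q, e = stored_charge_and_energy(volts)
# Peak current if that charge dumps into a part through the model resistance.
peak_current_a = volts / HBM_RESISTANCE_OHM
# RC time constant for the charge to bleed off through a grounded wrist strap.
strap_time_constant_s = WRIST_STRAP_RESISTANCE_OHM * BODY_CAPACITANCE_F

print(f"charge {q * 1e6:.2f} uC, energy {e * 1e3:.2f} mJ")
print(f"zap peak current ~{peak_current_a:.1f} A; strap RC = {strap_time_constant_s * 1e6:.0f} us")
```

In this sketch an ungrounded body can deliver amperes in a sudden zap, while a strapped body drains on a timescale of about a tenth of a millisecond, so charge never has a chance to build up.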

From static electricity to mine dust to fingerprints, every cleanroom is threatened by its own set of unseen enemies. But they all have one visible enemy in common: us.