Our imperfect vacuum

The emptiest parts of the universe aren’t so empty after all.

In the Large Hadron Collider, two beams of protons race around a 17-mile ring more than 1 million times before slamming into each other inside the massive particle detectors.

But rogue particles inside the beam pipes can pick off protons with premature collisions, reducing the intensity of the beam. As a result, the teams behind the LHC and other physics experiments around the world take great care to scrub their experimental spaces of as many unwanted particles as possible.

Ideally, they would conduct their experiments in a perfect vacuum. The problem is that there’s no such thing.

Even after a thorough evacuation, the LHC’s beam pipes contain about 3 million molecules per cubic centimeter. That density of particles is similar to what you would find 620 miles above Earth.
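As a rough illustration of how thin that gas is, the quoted number density can be converted to a pressure with the ideal gas law, P = n k T. The temperature below (300 K, roughly room temperature) is an assumption made only for this sketch. It is not a figure from the article, and parts of a real beam pipe are far colder.

```python
# Ideal-gas estimate of the pressure that corresponds to a number density.
# Assumption: the gas is at 300 K; this value is illustrative only.
BOLTZMANN_J_PER_K = 1.380649e-23   # Boltzmann constant (exact SI value)
SEA_LEVEL_PRESSURE_PA = 101325.0   # standard atmosphere


def pressure_pa(molecules_per_cm3, temperature_k):
    """Return the ideal-gas pressure in pascals, P = n * k * T."""
    molecules_per_m3 = molecules_per_cm3 * 1e6  # 1 m^3 = 1e6 cm^3
    return molecules_per_m3 * BOLTZMANN_J_PER_K * temperature_k


lhc_density_per_cm3 = 3e6      # "about 3 million molecules per cubic centimeter"
assumed_temperature_k = 300.0  # hypothetical, see note above

p = pressure_pa(lhc_density_per_cm3, assumed_temperature_k)
print(f"Estimated pressure: {p:.2e} Pa")
print(f"Fraction of sea-level pressure: {p / SEA_LEVEL_PRESSURE_PA:.1e}")
```

Under that assumption the pressure comes out around a hundred-millionth of a pascal, more than ten trillion times lower than the air pressure at sea level.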

“In the real world [a perfect vacuum] doesn’t happen,” says Linda Valerio, a mechanical engineer who works on the vacuum system at Fermi National Accelerator Laboratory. “Scientists are able to determine the acceptable level of vacuum required for each experiment, and engineers design the vacuum system to that level. The better the vacuum must be, the more cost and effort associated with achieving and maintaining it.”

Humans have been thinking about vacuums for thousands of years. Ancient philosophers called atomists argued that the world was made up of two elements: atoms and the void. Aristotle argued that nature would not allow a void to exist; particles around a vacuum would always move to fill it. And in the early 1700s, Isaac Newton argued that what seemed to be empty space was actually filled with an element called aether, a medium through which light could travel.

Physicists now know that even what appears to be empty space contains particles. Spaces between galaxies are known to contain a few hydrogen atoms per cubic meter.

No space is ever truly empty. Virtual particles pop in and out of existence everywhere. Virtual particles appear in matter-antimatter pairs and annihilate one another almost instantly. But they can interact with actual particles, which is how scientists find evidence of their existence.

Another inhabitant of the void is the faint thermal radiation left over from the big bang. It exists as a pattern of photons called the cosmic microwave background.

“When we think about vacuums, we generally think about [the absence of] particles with mass,” says Seth Digel, a SLAC experimental physicist who works with the Kavli Institute for Particle Astrophysics and Cosmology. “But if you expand the definition to include photons, to include the microwave background, then there isn’t any part of space that’s really empty.”

It turns out the universe is a little less lonely than previously thought.

Is the neutrino its own antiparticle?

The mysterious particle could hold the key to why matter won out over antimatter in the early universe.

Almost every particle has an antimatter counterpart: a particle with the same mass but opposite charge, among other qualities.

This seems to be true of neutrinos, tiny particles that are constantly streaming through us. Judging by the particles released when a neutrino interacts with other matter, scientists can tell when they’ve caught a neutrino versus an antineutrino.

But certain characteristics of neutrinos and antineutrinos make scientists wonder: Are they one and the same? Are neutrinos their own antiparticles?

This isn’t unheard of. Gluons and even Higgs bosons are thought to be their own antiparticles. But if scientists discover neutrinos are their own antiparticles, it could be a clue as to where they get their tiny masses—and whether they played a part in the existence of our matter-dominated universe.

Dirac versus Majorana

The idea of the antiparticle came about in 1928 when British physicist Paul Dirac developed what became known as the Dirac equation. His work sought to explain what happened when electrons moved at close to the speed of light. But his calculations resulted in a strange requirement: that electrons sometimes have negative energy.

“When Dirac wrote down his equation, that’s when he learned antiparticles exist,” says André de Gouvêa, a theoretical physicist and professor at Northwestern University. “Antiparticles are a consequence of his equation.”

In 1932, physicist Carl Anderson discovered the antimatter partner of the electron that Dirac had foreseen. He called it the positron: a particle like an electron but with a positive charge.

Dirac predicted that, in addition to having opposite charges, antimatter partners should have opposite handedness as well.

A particle is considered right-handed if its spin is in the same direction as its motion. It is considered left-handed if its spin is in the opposite direction.
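The definition above amounts to checking the sign of the dot product between the spin direction and the direction of motion. A minimal sketch follows; the function name and the example vectors are hypothetical and chosen only for illustration.

```python
# Classify handedness from the relative orientation of spin and motion.
# Vectors are plain (x, y, z) tuples; only their relative direction matters.


def handedness(spin, momentum):
    """Return 'right-handed' if spin points along the motion,
    'left-handed' if it points against it."""
    dot = sum(s * p for s, p in zip(spin, momentum))
    if dot > 0:
        return "right-handed"
    if dot < 0:
        return "left-handed"
    return "undefined"  # spin perpendicular to the motion, or a zero vector


moving_along_z = (0.0, 0.0, 1.0)
print(handedness((0.0, 0.0, 0.5), moving_along_z))   # spin along the motion
print(handedness((0.0, 0.0, -0.5), moving_along_z))  # spin against the motion
```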

Dirac’s equation allowed for neutrinos and antineutrinos to be different particles, and, as a result, four types of neutrino were possible: left- and right-handed neutrinos and left- and right-handed antineutrinos. But if the neutrinos had no mass, as scientists thought at the time, only left-handed neutrinos and right-handed antineutrinos needed to exist.

In 1937, Italian physicist Ettore Majorana debuted another theory: Neutrinos and antineutrinos are actually the same thing. The Majorana equation described neutrinos that, if they happened to have mass after all, could turn into antineutrinos and then back into neutrinos again.

The matter-antimatter imbalance

Whether neutrino masses were zero remained a mystery until 1998, when the Super-Kamiokande and SNO experiments found they do indeed have very small masses, an achievement recognized with the 2015 Nobel Prize in Physics. Since then, experiments have cropped up across Asia, Europe and North America searching for hints that the neutrino is its own antiparticle.

The key to finding this evidence is something called lepton number conservation. Scientists consider it a fundamental law of nature that lepton number is conserved, meaning that the number of leptons minus the number of antileptons involved in an interaction should be the same before and after the interaction occurs.

Scientists think that, just after the big bang, the universe should have contained equal amounts of matter and antimatter. The two types of particles should have interacted, gradually canceling one another until nothing but energy was left behind. Somehow, that’s not what happened.

Finding out that lepton number is not conserved would open up a loophole that would allow for the current imbalance between matter and antimatter. And neutrino interactions could be the place to find that loophole.

Neutrinoless double-beta decay

Scientists are looking for lepton number violation in a process called double beta decay, says SLAC theorist Alexander Friedland, who specializes in the study of neutrinos.

In its common form, double beta decay is a process in which a nucleus decays into a different nucleus and emits two electrons and two antineutrinos. This balances leptonic matter and antimatter both before and after the decay process, so it conserves lepton number.

If neutrinos are their own antiparticles, it’s possible that the antineutrinos emitted during double beta decay could annihilate one another and disappear, violating lepton number conservation. This is called neutrinoless double beta decay.

Such a process would favor matter over antimatter, creating an imbalance.
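The bookkeeping behind the two decay modes can be sketched in a few lines. Each lepton counts as +1 and each antilepton as -1; a nucleus carries no lepton number. The particle labels and function name below are illustrative choices for this sketch, not part of any physics library.

```python
# Lepton-number bookkeeping for ordinary and neutrinoless double beta decay.
LEPTON_NUMBER = {
    "nucleus": 0,        # nuclei are made of quarks, not leptons
    "electron": +1,
    "neutrino": +1,
    "positron": -1,
    "antineutrino": -1,
}


def total_lepton_number(particles):
    """Sum the lepton numbers of a list of particle labels."""
    return sum(LEPTON_NUMBER[name] for name in particles)


before = ["nucleus"]
ordinary = ["nucleus", "electron", "electron", "antineutrino", "antineutrino"]
neutrinoless = ["nucleus", "electron", "electron"]

print("before decay:       ", total_lepton_number(before))
print("ordinary decay:     ", total_lepton_number(ordinary))
print("neutrinoless decay: ", total_lepton_number(neutrinoless))
```

The ordinary decay leaves the total unchanged at zero, while the neutrinoless version ends with a total of two: two leptons have appeared with no antileptons to balance them.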

“Theoretically it would cause a profound revolution in our understanding of where particles get their mass,” Friedland says. “It would also tell us there has to be some new physics at very, very high energy scales—that there is something new in addition to the Standard Model we know and love.”

It’s possible that neutrinos and antineutrinos are different, and that there are two neutrino states and two antineutrino states, as called for in Dirac’s equation. The two missing states could be so elusive that physicists have yet to spot them.

But spotting evidence of neutrinoless double beta decay would be a sign that Majorana had the right idea instead—neutrinos and antineutrinos are the same.

“These are very difficult experiments,” de Gouvêa says. “They’re similar to dark matter experiments in the sense they have to be done in very quiet environments with very clean detectors and no radioactivity from anything except the nucleus you’re trying to study.”

Physicists are still evaluating their understanding of the elusive particles.

“There have been so many surprises coming out of neutrino physics,” says Reina Maruyama, a professor at Yale University associated with the CUORE neutrinoless double beta decay experiment. “I think it’s really exciting to think about what we don’t know.”

A speed trap for dark matter

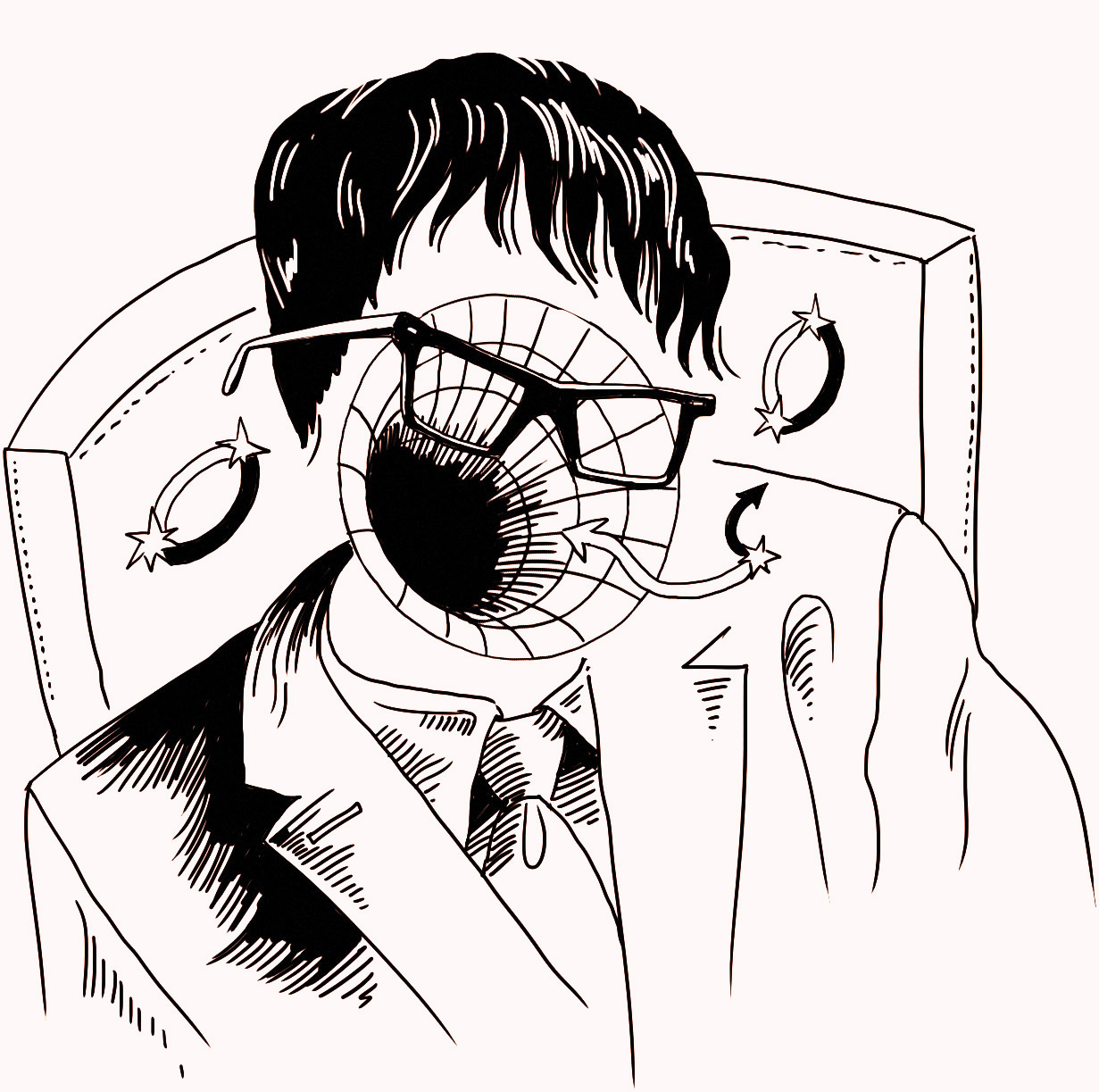

Analyzing the motion of X-ray sources could help researchers identify dark matter signals.

Dark matter or not dark matter? That is the question when it comes to the origin of intriguing X-ray signals scientists have found coming from space.

In a theory paper published today in Physical Review Letters, scientists have suggested a surprisingly simple way of finding the answer: by setting up a speed trap for the enigmatic particles.

Eighty-five percent of all matter in the universe is dark: It doesn’t emit light, nor does it interact much with regular matter other than through gravity.

The nature of dark matter remains one of the biggest mysteries of modern physics. Most researchers believe that the invisible substance is made of fundamental particles, but so far they’ve evaded detection. One way scientists hope to prove their particle assumption is by searching the sky for energetic light that would emerge when dark matter particles decayed or annihilated each other in space.

Over the past couple of years, several groups analyzing data from two X-ray satellites—the European Space Agency’s XMM-Newton and NASA’s Chandra X-ray space observatories—reported the detection of faint X-rays with a well-defined energy of 3500 electronvolts (3.5 keV). The signal emanated from the center of the Milky Way; its nearest neighbor galaxy, Andromeda; and a number of galaxy clusters.

Some scientists believe it might be a telltale sign of decaying dark matter particles called sterile neutrinos—hypothetical heavier siblings of the known neutrinos produced in fusion reactions in the sun, radioactive decays and other nuclear processes. However, other researchers argue that there could be more mundane astrophysical origins such as hot gases.

There might be a straightforward way of distinguishing between the two possibilities, suggest researchers from Ohio State University and the Kavli Institute for Particle Astrophysics and Cosmology, a joint institute of Stanford University and SLAC National Accelerator Laboratory.

It involves taking a closer look at the Doppler shifts of the X-ray signal. The Doppler effect is the shift of a signal to higher or lower frequencies depending on the relative velocity between the signal source and its observer. It’s used, for instance, in roadside speed traps by the police, but it could also help astrophysicists “catch” dark matter particles.

“On average, dark matter moves differently than gas,” says study co-author Ranjan Laha from KIPAC. “Dark matter has random motion, whereas gas rotates with the galaxies to which it is confined. By measuring the Doppler shifts in different directions, we can in principle tell whether a signal—X-rays or any other frequency—stems from decaying dark matter particles or not.”

Researchers would even know if the signal were caused by the observation instrument itself, because then the Doppler shift would be zero in all directions.

Although a promising approach, it can’t just yet be applied to the 3.5-keV X-rays because the associated Doppler shifts are very small. Current instruments either don’t have enough energy resolution for the analysis or they don’t operate in the right energy range.
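To get a feel for how small those shifts are, the non-relativistic Doppler formula, ΔE ≈ E · v/c, can be applied to the 3.5-keV line. The line-of-sight speeds below are hypothetical round numbers picked for illustration; they are not values from the paper.

```python
# Non-relativistic Doppler shift of a photon line: delta_E = E * v / c.
# Valid only for speeds much smaller than the speed of light.
SPEED_OF_LIGHT_KM_S = 299792.458


def doppler_shift_ev(line_energy_ev, velocity_km_s):
    """Energy shift in eV for a source moving along the line of sight.
    Positive velocity means the source approaches the observer."""
    return line_energy_ev * velocity_km_s / SPEED_OF_LIGHT_KM_S


line_energy_ev = 3500.0  # the 3.5-keV X-ray line from the text

# Hypothetical line-of-sight speeds, for illustration only.
for v in (50.0, 100.0, 200.0):
    shift = doppler_shift_ev(line_energy_ev, v)
    print(f"v = {v:5.0f} km/s -> shift of about {shift:.2f} eV "
          f"({shift / line_energy_ev:.1e} of the line energy)")
```

Speeds of this order move the line by only an electronvolt or so out of 3,500, which is why an instrument needs very fine energy resolution to tell one pattern of motion from another.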

However, this situation may change very soon with ASTRO-H, an X-ray satellite of the Japan Aerospace Exploration Agency, whose launch is planned for early this year. As the researchers show in their paper, it will have just the right specifications to return a verdict on the mystery X-ray line. Dark matter had better watch its speed.

Exploring the dark universe with supercomputers

Next-generation telescopic surveys will work hand-in-hand with supercomputers to study the nature of dark energy.

The 2020s could see a rapid expansion in dark energy research.

For starters, two powerful new instruments will scan the night sky for distant galaxies. The Dark Energy Spectroscopic Instrument, or DESI, will measure the distances to about 35 million cosmic objects, and the Large Synoptic Survey Telescope, or LSST, will capture high-resolution videos of nearly 40 billion galaxies.

Both projects will probe how dark energy—the phenomenon that scientists think is causing the universe to expand at an accelerating rate—has shaped the structure of the universe over time.

But scientists use more than telescopes to search for clues about the nature of dark energy. Increasingly, dark energy research is taking place not only at mountaintop observatories with panoramic views but also in the chilly, humming rooms that house state-of-the-art supercomputers.

The central question in dark energy research is whether it exists as a cosmological constant—a repulsive force that counteracts gravity, as Albert Einstein suggested a century ago—or if there are factors influencing the acceleration rate that scientists can’t see. Alternatively, Einstein’s theory of gravity could be wrong.

“When we analyze observations of the universe, we don’t know what the underlying model is because we don’t know the fundamental nature of dark energy,” says Katrin Heitmann, a senior physicist at Argonne National Laboratory. “But with computer simulations, we know what model we’re putting in, so we can investigate the effects it would have on the observational data.”

Growing a universe

Heitmann and her Argonne colleagues use their cosmology code, called HACC, on supercomputers to simulate the structure and evolution of the universe. The supercomputers needed for these simulations are built from hundreds of thousands of connected processors and typically crunch well over a quadrillion calculations per second.

The Argonne team recently finished a high-resolution simulation of the universe expanding and changing over 13 billion years, most of its lifetime. Now the data from their simulations is being used to develop processing and analysis tools for the LSST, and packets of data are being released to the research community so cosmologists without access to a supercomputer can make use of the results for a wide range of studies.

Risa Wechsler, a scientist at SLAC National Accelerator Laboratory and Stanford University professor, is the co-spokesperson of the DESI experiment. Wechsler is producing simulations that are being used to interpret measurements from the ongoing Dark Energy Survey, as well as to develop analysis tools for future experiments like DESI and LSST.

“By testing our current predictions against existing data from the Dark Energy Survey, we are learning where the models need to be improved for the future,” Wechsler says. “Simulations are our key predictive tool. In cosmological simulations, we start out with an early universe that has tiny fluctuations, or changes in density, and gravity allows those fluctuations to grow over time. The growth of structure becomes more and more complicated and is impossible to calculate with pen and paper. You need supercomputers.”

Supercomputers have become extremely valuable for studying dark energy because—unlike dark matter, which scientists might be able to create in particle accelerators—dark energy can only be observed at the galactic scale.

“With dark energy, we can only see its effect between galaxies,” says Peter Nugent, division deputy for scientific engagement at the Computational Cosmology Center at Lawrence Berkeley National Laboratory.

Trial and error bars

“There are two kinds of errors in cosmology,” Heitmann says. “Statistical errors, meaning we cannot collect enough data, and systematic errors, meaning that there is something in the data that we don’t understand.”

Computer modeling can help reduce both.

DESI will collect about 10 times more data than its predecessor, the Baryon Oscillation Spectroscopic Survey, and LSST will generate 30 laptops’ worth of data each night. But even these enormous data sets do not fully eliminate statistical error. Simulation can support observational evidence by modeling similar conditions to see if the same results appear consistently.

“We’re basically creating the same size data set as the entire observational set, then we’re creating it again and again—producing up to 10 to 100 times more data than the observational sets,” Nugent says.

Processing such large amounts of data requires sophisticated analyses. Simulations make this possible.

To program the tools that will compare observational and simulated data, researchers first have to model what the sky will look like through the lens of the telescope. In the case of LSST, this is done before the telescope is even built.

After populating a simulated universe with galaxies that are similar in distribution and brightness to real galaxies, scientists modify the results to account for the telescope’s optics, Earth’s atmosphere, and other limiting factors. By simulating the end product, they can efficiently process and analyze the observational data.

Simulations are also an ideal way to tackle many sources of systematic error in dark energy research. By all appearances, dark energy acts as a repulsive force. But if other, inconsistent properties of dark energy emerge in new data or observations, different theories and a way of validating them will be needed.

“If you want to look at theories beyond the cosmological constant, you can make predictions through simulation,” Heitmann says.

A conventional way to test new scientific theories is to introduce change into a system and compare it to a control. But in the case of cosmology, we are stuck in our universe, and the only way scientists may be able to uncover the nature of dark energy—at least in the foreseeable future—is by unleashing alternative theories in a virtual universe.

Black holes

Let yourself be pulled into the weird world of black holes.

Imagine, somewhere in the galaxy, the corpse of a star so dense that it punctures the fabric of space and time. So dense that it devours any surrounding matter that gets too close, pulling it into a riptide of gravity that nothing, not even light, can escape.

And once matter crosses over the point of no return, the event horizon, it spirals helplessly toward an almost infinitely small point, a point where spacetime is so curved that all our theories break down: the singularity. No one gets out alive.

Black holes sound too strange to be real. But they are actually pretty common in space. There are dozens known and probably millions more in the Milky Way and a billion times that lurking outside. Scientists also believe there could be a supermassive black hole at the center of nearly every galaxy, including our own. The makings and dynamics of these monstrous warpings of spacetime have been confounding scientists for centuries.

A history of black holes

It all started in England in 1665, when an apple broke from the branch of a tree and fell to the ground. Watching from his garden at Woolsthorpe Manor, Isaac Newton began thinking about the apple’s descent: a line of thought that, two decades later, ended with his conclusion that there must be some sort of universal force governing the motion of apples and cannonballs and even planetary bodies. He called it gravity.

Newton realized that any object with mass would have a gravitational pull. He found that as mass increases, gravity increases. To escape an object’s gravity, you would need to reach its escape velocity. To escape the gravity of Earth, you would need to travel at a rate of roughly 11 kilometers per second.
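The escape velocity follows from Newtonian gravity as v = sqrt(2GM/r), where M is the object’s mass and r is the distance from its center. A short sketch, using standard reference values for Earth’s mass and mean radius (these constants are supplied here, not taken from the article):

```python
# Newtonian escape velocity: v = sqrt(2 * G * M / r).
import math

GRAVITATIONAL_CONSTANT = 6.674e-11  # m^3 kg^-1 s^-2
EARTH_MASS_KG = 5.972e24
EARTH_RADIUS_M = 6.371e6            # mean radius


def escape_velocity_m_s(mass_kg, radius_m):
    """Speed needed to escape from the surface of a body, ignoring drag."""
    return math.sqrt(2.0 * GRAVITATIONAL_CONSTANT * mass_kg / radius_m)


v_earth = escape_velocity_m_s(EARTH_MASS_KG, EARTH_RADIUS_M)
print(f"Earth escape velocity: {v_earth / 1000:.1f} km/s")
```

The result lands close to the figure of roughly 11 kilometers per second quoted above.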

It was Newton’s discovery of the laws of gravity and motion that, 100 years later, led Reverend John Michell, a British polymath, to the conclusion that if there were a star much more massive or much more compressed than the sun, its escape velocity could surpass even the speed of light. He called these objects “dark stars.” Twelve years later, French scientist and mathematician Pierre Simon de Laplace arrived at the same conclusion and offered mathematical proof for the existence of what we now know as black holes.
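Michell’s argument can be reproduced by setting the Newtonian escape velocity, sqrt(2GM/r), equal to the speed of light and solving for the radius: r = 2GM/c². The sketch below is a Newtonian estimate only, and the solar mass and radius are standard reference values supplied for illustration.

```python
# Radius at which the Newtonian escape velocity equals the speed of light:
#   sqrt(2 * G * M / r) = c   ->   r = 2 * G * M / c**2
import math

GRAVITATIONAL_CONSTANT = 6.674e-11  # m^3 kg^-1 s^-2
SPEED_OF_LIGHT_M_S = 299792458.0
SOLAR_MASS_KG = 1.989e30
SOLAR_RADIUS_M = 6.957e8


def dark_star_radius_m(mass_kg):
    """Radius below which light could not escape, in the Newtonian picture."""
    return 2.0 * GRAVITATIONAL_CONSTANT * mass_kg / SPEED_OF_LIGHT_M_S ** 2


def escape_velocity_m_s(mass_kg, radius_m):
    return math.sqrt(2.0 * GRAVITATIONAL_CONSTANT * mass_kg / radius_m)


r_sun = dark_star_radius_m(SOLAR_MASS_KG)
v_sun = escape_velocity_m_s(SOLAR_MASS_KG, SOLAR_RADIUS_M)
print(f"Sun today: escape velocity of about {v_sun / 1000:.0f} km/s")
print(f"Sun as a 'dark star': radius of about {r_sun / 1000:.2f} km")
```

In this estimate the sun would have to be squeezed from a radius of roughly 700,000 kilometers down to about 3 kilometers before light could no longer leave its surface.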

In 1915, Albert Einstein set forth the revolutionary theory of general relativity, which regarded space and time as a curved four-dimensional object. Rather than viewing gravity as a force, Einstein saw it as a warping of space and time itself. A massive object, such as the sun, would create a dent in spacetime, a gravitational well, causing any surrounding objects, such as the planets in our solar system, to follow a curved path around it.

A month after Einstein published this theory, German physicist Karl Schwarzschild discovered something fascinating in Einstein’s equations. Schwarzschild found a solution that led scientists to the conclusion that a region of space could become so warped that it would create a gravitational well that no object could escape.

Up until 1967, these mysterious regions of spacetime had not been granted a universal title. Scientists tossed around terms like “collapsar” or “frozen star” when discussing the dark plots of inescapable gravity. At a conference in New York, physicist John Wheeler popularized the term “black hole.”

How to find a black hole

During star formation, gravity compresses matter until it is stopped by the star’s internal pressure. If the internal pressure does not stop the compression, it can result in the formation of a black hole.

Some black holes are formed when massive stars collapse. Others, scientists believe, were formed very early in the universe, a billion years after the big bang.

There is no limit to how immense a black hole can be; some are more than a billion times the mass of the sun. According to general relativity, there is also no limit to how small they can be (although quantum mechanics suggests otherwise). Black holes grow in mass as they continue to devour their surrounding matter. Smaller black holes accrete matter from a companion star, while the larger ones feed off any matter that gets too close.

Black holes contain an event horizon, beyond which not even light can escape. Because no light can get out, it is impossible to see beyond this surface of a black hole. But just because you can’t see a black hole doesn’t mean you can’t detect one.

Scientists can detect a black hole by looking at the motion of nearby stars and gas, as well as at matter the black hole accretes from its surroundings. This matter spins around the black hole, creating a flat disk called an accretion disk. The whirling matter loses energy and gives off X-rays and other electromagnetic radiation before it eventually passes the event horizon.

This is how astronomers identified Cygnus X-1 in 1971. Cygnus X-1 was found as part of a binary star system in which an extremely hot and bright star called a blue supergiant formed an accretion disk around an invisible object. The binary star system was emitting X-rays, which are not usually produced by blue supergiants. By calculating how far and fast the visible star was moving, astronomers were able to calculate the mass of the unseen object. Although it was compressed into a volume smaller than the Earth, the object was more than six times as massive as our sun.

Several different experiments study black holes. The Event Horizon Telescope will look at black holes in the nucleus of our galaxy and a nearby galaxy, M87. Its resolution is high enough to image flowing gas around the event horizon.

Scientists can also do reverberation mapping, which uses X-ray telescopes to look for time differences between emissions from various locations near the black hole to understand the orbits of gas and photons around the black hole.

The Laser Interferometer Gravitational-Wave Observatory, or LIGO, seeks to detect the gravitational radiation, or gravitational waves, that two black holes would emit as they merge.

In addition to accretion disks, black holes also have winds and incredibly bright jets erupting from them along their rotation axis, shooting out matter and radiation at nearly the speed of light. Scientists are still working to understand how these jets form.

What we don’t know

Scientists have learned that black holes are not as black as they once thought them to be. Some information might escape them. In 1974, Stephen Hawking published results that showed that black holes should radiate energy, or Hawking radiation.

Matter-antimatter pairs are constantly being produced throughout the universe, even outside the event horizon of a black hole. Quantum theory predicts that one particle might be dragged in before the pair has a chance to annihilate, and the other might escape in the form of Hawking radiation. This contradicts the picture general relativity paints of a black hole from which nothing can escape.

But as a black hole radiates Hawking radiation, it slowly evaporates until it eventually vanishes. So what happens to all the information encoded on its horizon? Does it disappear, which would violate quantum mechanics? Or is it preserved, as quantum mechanics would predict? One theory is that the Hawking radiation contains all of that information. When the black hole evaporates and disappears, it has already preserved the information of everything that fell into it, radiating it out into the universe.

Black holes give scientists an opportunity to test general relativity in very extreme gravitational fields. They see black holes as an opportunity to answer one of the biggest questions in particle physics theory: Why can’t we square quantum mechanics with general relativity?

Beyond the event horizon, black holes curve into one of the darkest mysteries in physics. Scientists can’t explain what happens when objects cross the event horizon and spiral towards the singularity. General relativity and quantum mechanics collide and Einstein’s equations explode into infinities. Black holes might even house gateways to other universes, called wormholes, and violent fountains of energy and matter, called white holes, though it seems very unlikely that nature would allow these structures to exist.

Sometimes reality is stranger than fiction.

How to wrangle a particle

Learn some particle accelerator basics from a Fermilab accelerator operator.

How do you keep a particle inside of an accelerator? Fermilab accelerator operator Cindy Joe explains.

The booming science of dwarf galaxies

A recent uptick in the discovery of the smallest, oldest galaxies benefits studies of dark matter, galaxy formation and the evolution of the universe.

Galaxies are commonly perceived as gigantic spirals full of billions to trillions of stars. Yet some galaxies, called dwarf galaxies, can harbor just a few hundred suns.

The recent discovery of 20 new potential dwarf galaxies fueled a boom in the science of these faint objects, which are valuable tools to study dark matter, galaxy formation and cosmic history.

Ten years ago, only about a dozen dwarf galaxies were known. This number quickly doubled after the Sloan Digital Sky Survey began its second phase of operation in 2005. SDSS-II took better-than-ever images of the sky, and researchers started using computer programs to identify dwarf galaxies in them.

The number of potential dwarf galaxies has spiked of late, largely due to results from the first two years of the new Dark Energy Survey, which can see objects 10 times as faint. The total number of known dwarf galaxy candidates orbiting our Milky Way—not all of them have been confirmed as galaxies yet—has now reached about 50.

“The precise number is being updated on almost a weekly basis during recent months,” says Keith Bechtol of the University of Wisconsin, Madison, one of the lead authors of two DES papers, published in March and August, announcing the discoveries of potential satellite galaxies. “These are truly exciting times for this type of research.”

Dim lights for dark matter research

In general, the term “dwarf galaxy” refers to a galaxy that is smaller than a tenth of the size of our Milky Way, which is made of 100 billion stars. So not all dwarf galaxies are truly dwarfish. In fact, two of these objects in the southern night sky, called the Magellanic Clouds, are so large that they are visible to the naked eye.

However, researchers are particularly interested in the faintest dwarf galaxies. They make excellent laboratories in which to study dark matter—the invisible form of matter that is five times more prevalent than its visible counterpart but whose nature remains a mystery.

Scientists’ best guess is that it’s made of fundamental particles, with hypothetical weakly interacting massive particles, or WIMPs, as the top contenders. Researchers think WIMPs could produce gamma rays as they decay or annihilate each other in space. They’re searching for this radiation with sensitive gamma-ray telescopes.

Ultra-faint dwarf galaxies orbiting the Milky Way are ideal targets for this search for two reasons. First, because they have high ratios of dark matter to regular matter and are relatively close to us, they could produce detectable dark matter signals.

“The motions of stars in ultra-faint galaxies are so fast that they are best explained if there is 100 to 1000 times more dark matter than the masses of all the stars taken together,” Bechtol says.

Second, these galaxies are the oldest known galaxies. Their busiest days are in the past; most formed their stars more than 10 billion years ago. This, together with the fact that they have few stars and little gas, makes them very “clean” objects for the dark matter search.

By contrast, in the also dark-matter-rich center of the much younger Milky Way, stars are still forming and many other astrophysical processes are producing gamma-ray signals that could obscure signs of dark matter.

“If we ever saw gamma rays coming from these ultra-faint galaxies, it would be a smoking gun for dark matter,” says researcher Andrea Albert of the Kavli Institute for Particle Astrophysics and Cosmology, a joint institute of Stanford University and the SLAC National Accelerator Laboratory. She is involved in the dark matter analysis of the recently discovered DES dwarf galaxy candidates with the Fermi Gamma-ray Space Telescope.

No convincing sign of WIMPs has yet been found coming from dwarf galaxies, including the most recent DES candidate dwarf galaxies, as preliminary results presented at the 2015 Topics in Astroparticle and Underground Physics conference in Torino, Italy, suggest.

But even the absence of a signal is progress because it sets limits on what dark matter can and cannot be.

Excavation tools for astrophysical archeology

Researchers also study dwarf galaxies because they hope to learn about the history of our cosmic neighborhood and the formation of galaxies like our own.

Current models suggest that galaxies don’t start out as enormous objects with a gazillion stars, but rather as small structures that merge with others into larger ones. Dwarf galaxies are at the bottom of this hierarchy and are believed to be the building blocks of larger galaxies.

“The way we see the Milky Way and its satellites today is only a snapshot in time,” says astronomer Marla Geha of Yale University. “Our own galaxy is a merger of smaller galaxies, and it’s still merging.”

In other words, the Milky Way itself may have started out as a dwarf galaxy with only a few hundred to a thousand stars. Today, it is a large galaxy, and simulations suggest that in 4 to 5 billion years the Milky Way will merge with the Andromeda Galaxy, the nearest major galaxy in our cosmic neighborhood.

But dwarf galaxies can give us insight into more than just our local neighborhood. By better understanding dwarf galaxies, researchers can also study the evolution of the whole universe.

Since dark matter is abundant and interacts gravitationally with itself and regular matter, it has influenced the cosmos and its structures ever since the big bang. In fact, we know today that galaxies are embedded in clumps, or halos, of dark matter that have formed in the expanding universe. These halos, in turn, are surrounded by smaller halos that harbor dwarf satellite galaxies.

“The discovery of an increasing number of dwarf galaxies is exciting because our cosmological theories predict that the Milky Way has a few hundred of them,” Geha says. “If we don’t find enough satellites, we will need to adjust our models. However, considering that we haven’t surveyed the entire sky yet and haven’t been looking deeply enough, we’re mostly on track for our predictions.”

Some of the dwarf galaxy candidates discovered by DES earlier this year could potentially orbit the Magellanic Clouds, the largest satellites of the Milky Way. If confirmed, the result would be quite fascinating, says KIPAC researcher Risa Wechsler.

“Satellites of satellites are predicted by our models of dark matter,” she says. “Either we’re seeing these types of systems for the first time, or there is something we don’t understand about how these satellite galaxies are distributed in the sky.”

A better and better view

So far, studies of dwarf galaxies have largely been restricted to satellites of our Milky Way, and researchers believe that much could be learned from studying more distant ones.

“One question we would like to answer is why the faintest dwarf galaxies are so extreme in size, age and dark matter content,” Geha says. “Is this because the ones we can observe are affected by their proximity to the Milky Way, or are these properties common to all dwarf galaxies in the universe?”

For the ultimate test, researchers want to be able to detect even fainter objects and look farther into space than they can with DES. They’ll be able to do so once the Large Synoptic Survey Telescope (LSST) comes online in 2022. The telescope’s 3.2-gigapixel camera will produce the deepest views of the night sky ever observed.

And if that’s not enough, NASA is planning a space mission called the Wide-Field Infrared Survey Telescope, which could spot ultra-faint dwarf galaxies that evade even LSST’s watchful eye.

CERN and US increase cooperation

The United States and the European physics laboratory have formally agreed to partner on continued LHC research, upcoming neutrino research and a future collider.

Today in a ceremony at CERN, US Ambassador to the United Nations Pamela Hamamoto and CERN Director-General Rolf Heuer signed five formal agreements that will serve as the framework for future US-CERN collaboration.

These protocols augment the US-CERN cooperation agreement signed in May 2015 in a White House ceremony and confirm the United States’ continued commitment to research at the Large Hadron Collider. They also officially expand the US-CERN partnership to include work on a US-based neutrino research program and on the study of a future circular collider at CERN.

“This is truly a good day for the relationship between CERN and the United States,” says Hamamoto, US permanent representative to the United Nations in Geneva. “By working together across borders and cultures, we challenge our knowledge and push back the frontiers of the unknown.”

The partnership between the United States and CERN dates back to the 1950s, when American scientist Isidor Rabi served as one of CERN’s founding members.

“Today’s agreements herald a new era in CERN-US collaboration in particle physics,” Heuer says. “They confirm the US commitment to the LHC project, and for the first time, they set down in black and white European participation through CERN in pioneering neutrino research in the US. They are a significant step towards a fully connected trans-Atlantic research program.”

Today, the United States is the most represented nation in both the ATLAS and CMS collaborations at the LHC. Its contributions are sponsored through the US Department of Energy’s Office of Science and the National Science Foundation.

According to the new protocols, the United States will continue to support the LHC program through participation in the ATLAS, CMS and ALICE experiments. The LHC Accelerator Research Program, an R&D partnership among five US national laboratories, plans to develop powerful new magnets and accelerating cavities for an upgrade to the accelerator called the High-Luminosity LHC, scheduled to begin at the end of this decade.

In addition, a joint neutrino-research protocol will enable a new type of reciprocal relationship to blossom between CERN and the US.

“The CERN neutrino platform is an important development for CERN,” says Marzio Nessi, its coordinator. “It embodies CERN’s undertaking to foster and contribute to fundamental research in neutrino physics at particle accelerators worldwide, notably in the US.”

The agreement will enable scientists and engineers working at CERN to participate in the design and development of technology for the Deep Underground Neutrino Experiment, a Fermilab-hosted experiment that will explore the mystery of neutrino oscillations and neutrino mass.

For the first time, CERN will serve as a platform for scientists participating in a major research program hosted on another continent. CERN will serve as a European base for scientists working on the DUNE experiment and on short-baseline neutrino research projects also hosted by the United States.

Finally, the protocols pave the way beyond the LHC research program. The United States and CERN will collaborate on physics and technology studies for a proposed new circular accelerator designed to reach energies seven times higher than those of the LHC.

The protocols take effect immediately and will be renewed automatically on a five-year basis.