Physicists build ultra-powerful accelerator magnet

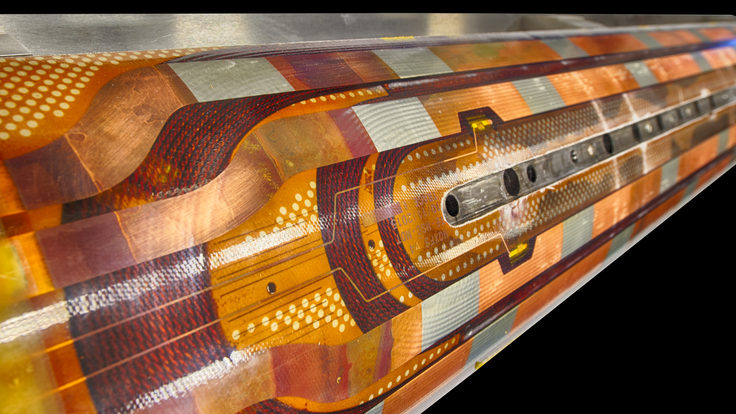

An international partnership to upgrade the LHC has yielded the strongest accelerator magnet ever created.

The next generation of cutting-edge accelerator magnets is no longer just an idea. Recent tests revealed that the United States and CERN have successfully co-created a prototype superconducting accelerator magnet that is much more powerful than those currently inside the Large Hadron Collider. Engineers will incorporate more than 20 magnets similar to this model into the next iteration of the LHC, which will take the stage in 2026 and increase the LHC’s luminosity by a factor of ten. That translates into a ten-fold increase in the data rate.

“Building this magnet prototype was truly an international effort,” says Lucio Rossi, the head of the High-Luminosity (HighLumi) LHC project at CERN. “Half the magnetic coils inside the prototype were produced at CERN, and half at laboratories in the United States.”

During the original construction of the Large Hadron Collider, US Department of Energy national laboratories foresaw the future need for stronger LHC magnets and created the LHC Accelerator Research Program (LARP): an R&D program committed to developing new accelerator technology for future LHC upgrades.

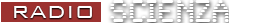

This 1.5-meter-long model, which is a fully functioning accelerator magnet, was developed by scientists and engineers at Fermilab, Brookhaven National Laboratory, Lawrence Berkeley National Laboratory, and CERN. The magnet recently underwent an intense testing program at Fermilab, which it passed in March with flying colors. It will now undergo a rigorous series of endurance and stress tests to simulate the arduous conditions inside a particle accelerator.

This new type of magnet will replace about 5 percent of the LHC’s focusing and steering magnets when the accelerator is converted into the High-Luminosity LHC, a planned upgrade which will increase the number and density of protons packed inside the accelerator. The HL-LHC upgrade will enable scientists to collect data at a much faster rate.

The LHC’s magnets are made by repeatedly winding a superconducting cable into long coils. These coils are then installed on all sides of the beam pipe and encased inside a superfluid helium cryogenic system. When cooled to 1.9 Kelvin, the coils can carry a huge amount of electrical current with zero electrical resistance. By modulating the amount of current running through the coils, engineers can manipulate the strength and quality of the resulting magnetic field and control the particles inside the accelerator.

The magnets currently inside the LHC are made from niobium titanium, a superconductor that can operate inside a magnetic field of up to 10 teslas before losing its superconducting properties. This new magnet is made from niobium-three tin (Nb3Sn), a superconductor capable of carrying current through a magnetic field of up to 20 teslas.

“We’re dealing with a new technology that can achieve far beyond what was possible when the LHC was first constructed,” says Giorgio Apollinari, Fermilab scientist and Director of US LARP. “This new magnet technology will make the HL-LHC project possible and empower physicists to think about future applications of this technology in the field of accelerators.”

This technology is powerful and versatile—like upgrading from a moped to a motorcycle. But this new super material doesn’t come without its drawbacks.

“Niobium-three tin is much more complicated to work with than niobium titanium,” says Peter Wanderer, head of the Superconducting Magnet Division at Brookhaven National Lab. “It doesn’t become a superconductor until it is baked at 650 degrees Celsius. This heat-treatment changes the material’s atomic structure and it becomes almost as brittle as ceramic.”

Building a moose-sized magnet from a material more fragile than a teacup is not an easy endeavor. Scientists and engineers at the US national laboratories spent 10 years designing and perfecting a new and internationally reproducible process to wind, form, bake and stabilize the coils.

“The LARP-CERN collaboration works closely on all aspects of the design, fabrication and testing of the magnets,” says Soren Prestemon of the Berkeley Center for Magnet Technology at Berkeley Lab. “The success is a testament to the seamless nature of the collaboration, the level of expertise of the teams involved, and the ownership shown by the participating laboratories.”

This model is a huge success for the engineers and scientists involved. But it is only the first step toward building the next big supercollider.

“This test showed that it is possible,” Apollinari says. “The next step is to apply everything we’ve learned moving from this prototype into bigger and bigger magnets.”

Six weighty facts about gravity

Perplexed by gravity? Don’t let it get you down.

Gravity: we barely ever think about it, at least until we slip on ice or stumble on the stairs. To many ancient thinkers, gravity wasn’t even a force—it was just the natural tendency of objects to sink toward the center of Earth, while planets were subject to other, unrelated laws.

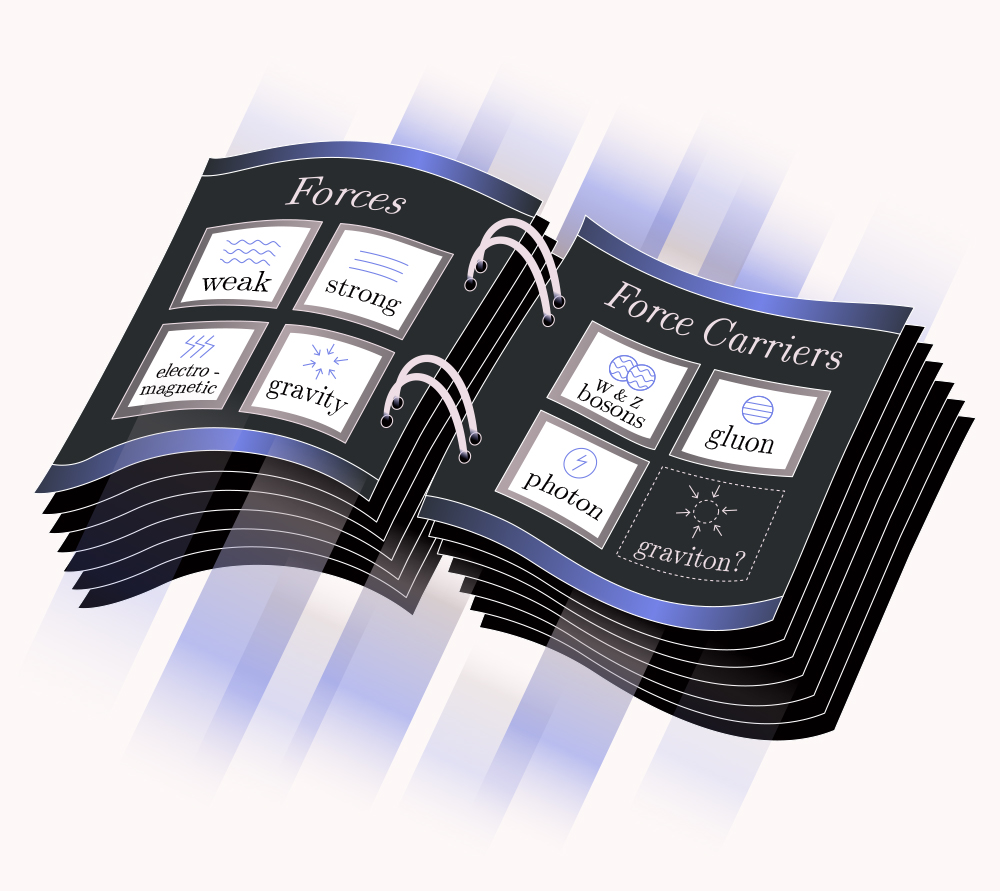

Of course, we now know that gravity does far more than make things fall down. It governs the motion of planets around the Sun, holds galaxies together and determines the structure of the universe itself. We also recognize that gravity is one of the four fundamental forces of nature, along with electromagnetism, the weak force and the strong force.

The modern theory of gravity—Einstein’s general theory of relativity—is one of the most successful theories we have. At the same time, we still don’t know everything about gravity, including the exact way it fits in with the other fundamental forces. But here are six weighty facts we do know about gravity.

1. Gravity is by far the weakest force we know.

Gravity only attracts—there’s no negative version of the force to push things apart. And while gravity is powerful enough to hold galaxies together, it is so weak that you overcome it every day. If you pick up a book, you’re counteracting the force of gravity from all of Earth.

For comparison, the electric force between an electron and a proton inside an atom is roughly 10^39 (that’s a one with 39 zeroes after it) times stronger than the gravitational attraction between them. In fact, gravity is so weak, we don’t know exactly how weak it is.
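The comparison can be checked with a few lines of arithmetic. The sketch below is not from the original article; it assumes rounded published values for the physical constants and uses the fact that both forces fall off with the square of the distance, so the separation cancels out of the ratio. It gives a value of roughly 2 × 10^39.

```python
# Rounded published values in SI units (assumed here, not quoted in the article).
K_COULOMB = 8.9875517923e9      # Coulomb constant, N m^2 / C^2
G_NEWTON = 6.67430e-11          # gravitational constant, N m^2 / kg^2
E_CHARGE = 1.602176634e-19      # elementary charge, C
M_ELECTRON = 9.1093837015e-31   # electron mass, kg
M_PROTON = 1.67262192369e-27    # proton mass, kg


def electric_to_gravity_ratio():
    """Ratio of electric to gravitational attraction for an electron-proton pair.

    Both forces scale as 1/r^2, so the distance between the particles cancels.
    """
    electric = K_COULOMB * E_CHARGE ** 2
    gravity = G_NEWTON * M_ELECTRON * M_PROTON
    return electric / gravity


ratio = electric_to_gravity_ratio()
print(f"electric / gravitational force: {ratio:.2e}")
```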

2. Gravity and weight are not the same thing.

Astronauts on the space station float, and sometimes we lazily say they are in zero gravity. But that’s not true. The force of gravity on an astronaut is about 90 percent of the force they would experience on Earth. However, astronauts are weightless, since weight is the force the ground (or a chair or a bed or whatever) exerts back on them on Earth.
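The "about 90 percent" figure follows from the inverse-square law. Here is a minimal sketch, assuming a mean Earth radius of 6371 kilometers and a typical space station altitude of about 400 kilometers (neither number appears in the article).

```python
EARTH_RADIUS_KM = 6371.0   # assumed mean radius of Earth
ISS_ALTITUDE_KM = 400.0    # assumed typical space station altitude


def gravity_fraction(altitude_km, radius_km=EARTH_RADIUS_KM):
    """Gravity at a given altitude as a fraction of gravity at the surface.

    Newtonian gravity weakens with the square of the distance from Earth's center.
    """
    return (radius_km / (radius_km + altitude_km)) ** 2


fraction = gravity_fraction(ISS_ALTITUDE_KM)
print(f"gravity in orbit relative to the ground: {fraction:.1%}")
```

Under these assumptions the result comes out just under 90 percent, which is why the floating has nothing to do with gravity switching off.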

Take a bathroom scale onto an elevator in a big fancy hotel and stand on it while riding up and down, ignoring any skeptical looks you might receive. Your weight fluctuates, and you feel the elevator accelerating and decelerating, yet the gravitational force is the same. In orbit, on the other hand, astronauts move along with the space station. There is nothing to push them against the side of the spaceship to make weight. Einstein turned this idea, along with his special theory of relativity, into general relativity.

3. Gravity makes waves that move at light speed.

General relativity predicts gravitational waves. If you have two stars or white dwarfs or black holes locked in mutual orbit, they slowly get closer as gravitational waves carry energy away. In fact, Earth also emits gravitational waves as it orbits the sun, but the energy loss is too tiny to notice.

We’ve had indirect evidence for gravitational waves for 40 years, but the Laser Interferometer Gravitational-wave Observatory (LIGO) only confirmed the phenomenon this year. The detectors picked up a burst of gravitational waves produced by the collision of two black holes more than a billion light-years away.

One consequence of relativity is that nothing can travel faster than the speed of light in vacuum. That goes for gravity, too: If something drastic happened to the sun, the gravitational effect would reach us at the same time as the light from the event.
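How long would that delay be? A rough estimate, assuming the average Earth-sun distance of one astronomical unit (a value not given in the article), comes to a little over eight minutes for light and gravity alike.

```python
SPEED_OF_LIGHT_M_S = 299_792_458.0      # exact by definition
ASTRONOMICAL_UNIT_M = 1.495978707e11    # assumed mean Earth-sun distance


def travel_time_seconds(distance_m, speed_m_s=SPEED_OF_LIGHT_M_S):
    """Time for a signal moving at the given speed to cover the distance."""
    return distance_m / speed_m_s


delay_s = travel_time_seconds(ASTRONOMICAL_UNIT_M)
print(f"delay: {delay_s:.0f} seconds, or about {delay_s / 60:.1f} minutes")
```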

4. Explaining the microscopic behavior of gravity has thrown researchers for a loop.

The other three fundamental forces of nature are described by quantum theories at the smallest of scales, specifically by the Standard Model. However, we still don’t have a fully working quantum theory of gravity, though researchers are trying.

One avenue of research is called loop quantum gravity, which uses techniques from quantum physics to describe the structure of space-time. It proposes that space-time is particle-like on the tiniest scales, the same way matter is made of particles. Matter would be restricted to hopping from one point to another on a flexible, mesh-like structure. This allows loop quantum gravity to describe the effect of gravity on a scale far smaller than the nucleus of an atom.

A more famous approach is string theory, where particles, including gravitons, are considered to be vibrations of strings that are coiled up in dimensions too small for experiments to reach. Neither loop quantum gravity nor string theory, nor any other theory, is currently able to provide testable details about the microscopic behavior of gravity.

5. Gravity might be carried by massless particles called gravitons.

In the Standard Model, particles interact with each other via other force-carrying particles. For example, the photon is the carrier of the electromagnetic force. The hypothetical particles for quantum gravity are gravitons, and we have some ideas of how they should work from general relativity. Like photons, gravitons are likely massless. If they had mass, experiments should have seen something, although that doesn’t rule out a ridiculously tiny mass.

6. Quantum gravity appears at the smallest length anything can be.

Gravity is very weak, but the closer together two objects are, the stronger it becomes. Ultimately, it reaches the strength of the other forces at a very tiny distance known as the Planck length, roughly 20 orders of magnitude smaller than the nucleus of an atom.
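The Planck length is built from three constants of nature: the reduced Planck constant, the gravitational constant and the speed of light. The sketch below evaluates the standard formula, sqrt(ħG/c³), with rounded published constants, and compares the result with an assumed order-of-magnitude nuclear size of one femtometer (an illustrative figure, not one from the article).

```python
import math

HBAR = 1.054571817e-34          # reduced Planck constant, J s
G_NEWTON = 6.67430e-11          # gravitational constant, N m^2 / kg^2
SPEED_OF_LIGHT = 299_792_458.0  # m / s
NUCLEUS_SIZE_M = 1e-15          # assumed order-of-magnitude size of a nucleus


def planck_length():
    """Planck length in meters, from sqrt(hbar * G / c^3)."""
    return math.sqrt(HBAR * G_NEWTON / SPEED_OF_LIGHT ** 3)


l_planck = planck_length()
orders = math.log10(NUCLEUS_SIZE_M / l_planck)
print(f"Planck length: {l_planck:.2e} m")
print(f"orders of magnitude below a nucleus: {orders:.0f}")
```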

That’s where quantum gravity’s effects will be strong enough to measure, but it’s far too small for any experiment to probe. Some people have proposed theories that would let quantum gravity show up at close to the millimeter scale, but so far we haven’t seen those effects. Others have looked at creative ways to magnify quantum gravity effects, using vibrations in a large metal bar or collections of atoms kept at ultracold temperatures.

It seems that, from the smallest scale to the largest, gravity keeps attracting scientists’ attention. Perhaps that’ll be some solace the next time you take a tumble, when gravity grabs your attention too.

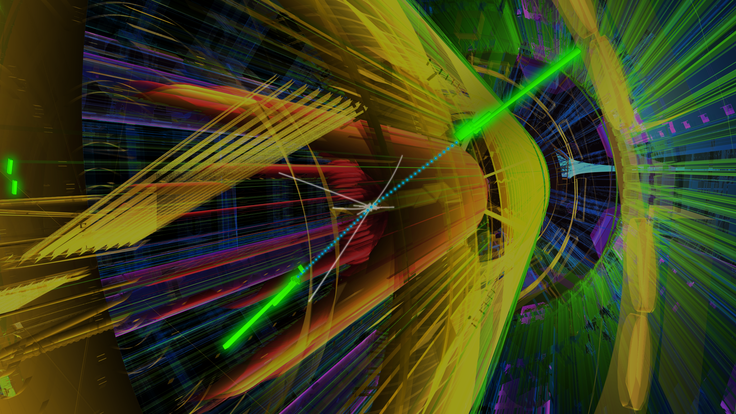

Belle II and the matter of antimatter

Go inside the new detector looking for why we’re here.

We live in a world full of matter: stars made of matter, planets made of matter, pizza made of matter. But why is there pizza made of matter rather than pizza made of antimatter or, indeed, no pizza at all?

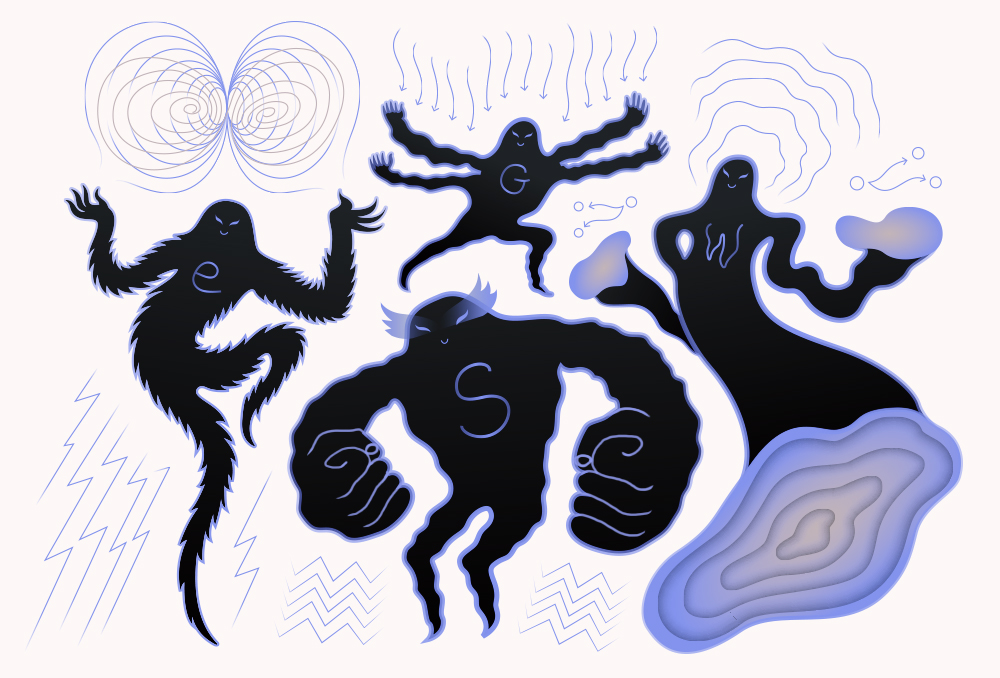

In the first split-second after the big bang, the universe made a smidgen more matter than antimatter. Instead of matter and antimatter annihilating one another and leaving an empty, cold universe, we ended up with a surplus of stuff. Now scientists need the most sensitive detectors and mountains of experimental data to understand where that imbalance comes from.

Belle II is one of those detectors that will look for differences between matter and antimatter to explain why we’re here at all. Currently under construction, the 7.5-meter-long detector will be installed in the newly recommissioned SuperKEKB particle accelerator located in Tsukuba, Japan. SuperKEKB runs beams of electrons and positrons (the antimatter version of electrons) into each other at close to the speed of light, and Belle II—once it is fully operational in 2018—will analyze the detritus of the collisions.

“All the experimental results to this point have been consistent with the so-called Standard Model of particle physics,” says Tom Browder, a physicist at the University of Hawaii and one of the spokespeople for the project. But while the Standard Model allows for some asymmetry, it doesn’t explain the matter-antimatter imbalance that exists. We need something more.

Belle II will look for the signatures of new physics in the rare decays of bottom quarks, charm quarks and tau leptons. (Bottom quarks are also known as beauty quarks, which is the “B” in SuperKEKB; the name “Belle” itself refers to “beauty”). Bottom and charm quarks are massive compared with the up and down quarks that make up ordinary matter, while tau leptons are the much heavier cousins of electrons. All three particles are unstable, decaying into a variety of lower-mass particles. If Belle II researchers spot a difference in the decays of these particles and their antimatter counterparts, it could explain why we ended up in a cosmos full of matter.

Finding the beauty is a beast

When electrons and positrons collide at low energy, they annihilate and convert all of their mass into gamma rays. At very high speed, however, the extra energy produces pairs of matter and antimatter particles, all of which are more massive than the original electrons. SuperKEKB smashes electrons and positrons together with the right energy to make B-mesons, particles made of a bottom quark and an antimatter quark of another type, along with anti-B-mesons, made of a bottom anti-quark and a matter quark.

These mesons change into other particles in complex ways as the bottom quarks and antiquarks decay. Belle II’s detectors will try to find decays that either aren’t allowed by the Standard Model or happen more or less often than expected. Any such deviations could be signs of new physics. The detector can also help physicists better understand particles made of four or five quarks (tetraquarks and pentaquarks) or stuck-together “molecules” of quarks.

“The cleaner environment at Belle II might make it easier to study some of those states, and to try to understand what the internal quark structure is,” says James Fast of the Department of Energy’s Pacific Northwest National Laboratory, lead lab for the US contributions to the Belle II detector upgrade.

SuperKEKB collides electrons and positrons, which aren’t made of anything smaller. This results in a clean collision. And because the energy going into each collision at SuperKEKB is well known, Belle II can study decays with invisible particles such as neutrinos by looking for the missing energy they carry away.

“The cleanliness of data at SuperKEKB enables the majority of B[-meson] events to be recorded,” says Kay Kinoshita of the University of Cincinnati, who works on the software Belle II will use to analyze collisions.

But Belle II isn’t the only detector searching for these rare bottom quark decays. An experiment at the LHC, LHCb, is also on the hunt.

The LHC produces a wider variety of particles containing bottom quarks. That includes a type that decays into two muons, “which is a 'golden' mode for effects from supersymmetry and theories with multiple Higgs bosons,” says Harry Cliff, a physicist at the University of Cambridge who works on LHCb.

Race to the bottom

Belle II is the aptly named successor to the Belle experiment and is designed to handle as much as 50 times the number of collisions in the previous design. It’s a monumental effort involving hundreds of physicists and engineers from 23 nations in Asia, Europe and North America.

“The amount of data that Belle II will collect can be comparable to data management challenges that are faced by the big LHC experiments [like CMS and ATLAS],” says Fast. Universities don’t have the resources to operate the computers needed to manage all the data coming from Belle II, so a national lab like PNNL is an ideal host. Similar data centers for Belle II will operate in Japan and Europe.

At present, the SuperKEKB accelerator is successfully storing both electrons and positrons to prepare for the tests that will lead to new experiments. The Belle II assembly will be in place next year, followed by a commissioning process to make sure everything is working properly. In 2018, the full experiment will be operational and producing data to find exotic B-meson behavior.

It may feel ironic to take years to recreate what the universe did in a split second, but such is the nature of particle physics. The process of smashing electrons and positrons together isn’t identical to the process that created the early cosmos either, but if there’s any new physics hiding in the decays of bottom quarks, this is the type of experiment that could find it. Which is, after all, the beauty of science.

The Milky Way’s hot spot

The center of our galaxy is a busy place. But it might be one of the best sites to hunt for dark matter.

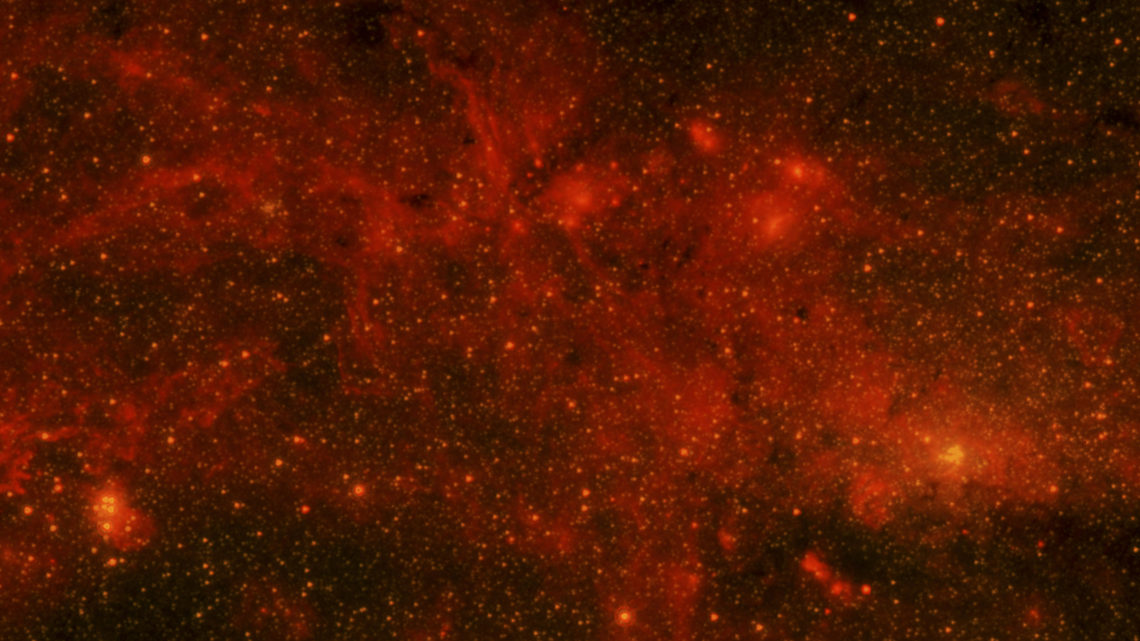

When you look up at night, the Milky Way appears as a swarm of stars arranged in a misty white band across the sky.

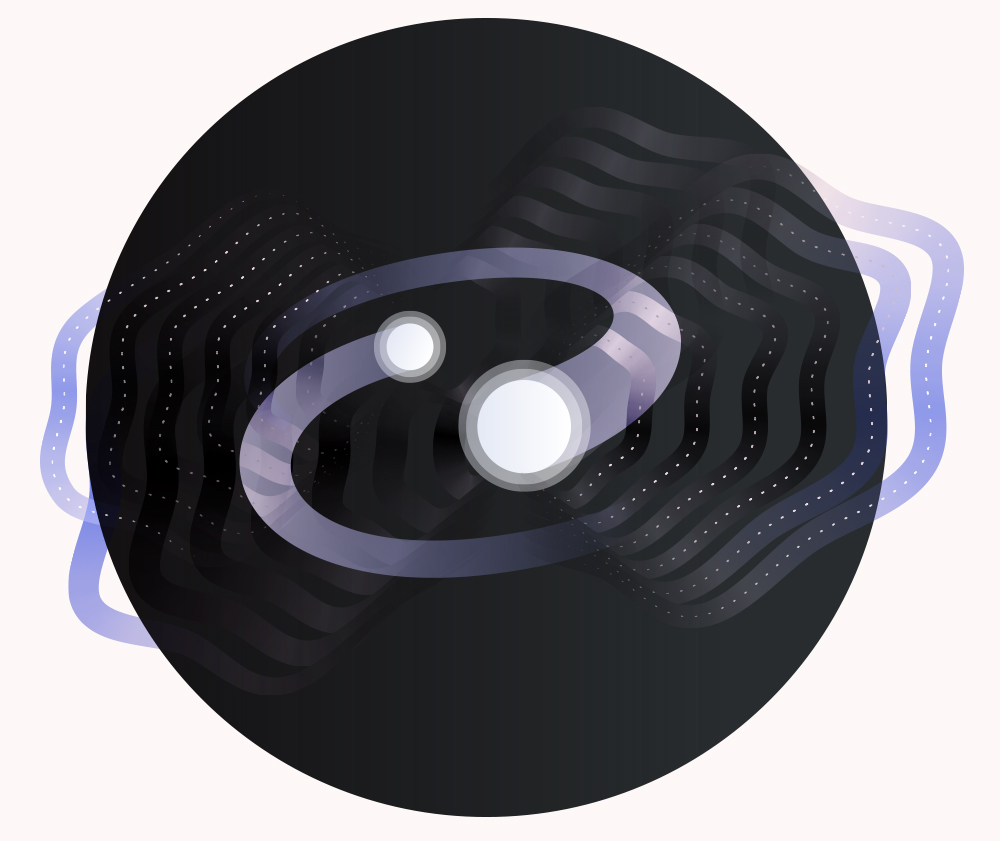

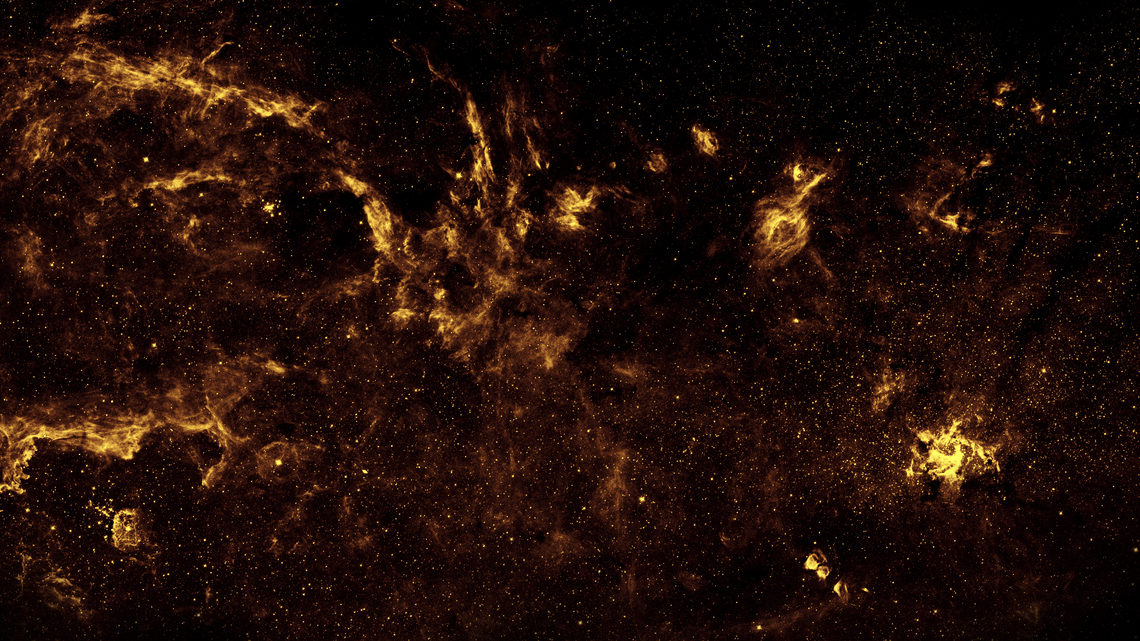

But from an outside perspective, our galaxy looks more like a disk, with spiral arms of stars reaching out into the universe. At the center of this disk is a small region around which the entire pinwheel of our galaxy rotates, a region packed with exotic astronomical phenomena ranging from dark matter and newborn stars to a supermassive black hole. Astronomers call this region of the Milky Way the galactic center.

It’s a strange neighborhood, and scientists have reason to believe it’s one of the best places to hunt for dark matter.

Phenomena in our galaxy’s heart

In the ’70s, scientists hypothesized that a supermassive black hole might be lurking in the center of the Milky Way. Black holes are regions of space-time where gravity is so strong that not even light can escape.

After decades of trying to indirectly identify the mysterious object in the galactic center by tracing the orbits of stars and gas, astronomers were finally able to calculate its mass in 2008. It weighs more than 4 million times as much as the sun, making it a prime supermassive black hole candidate.

About 10 percent of all new star formation in the galaxy occurs in the galactic center. This is strange because local conditions produce an extreme environment in which it should be difficult for stars to form.

Scientists believe that at least some of the new stars being formed should explode and transform into pulsars, but they aren’t seeing any. Pulsars emit a regular pulsating signal, like a lighthouse. One early explanation for the apparent lack of pulsars in the galactic center was that the magnetic fields there could be bending their radio waves on their way to us, hiding their pulsating signals. But recently scientists measured the strength of the fields and realized the bending was much less than they had anticipated. The mystery of the missing pulsars remains unsolved.

The galactic center also has a notably high concentration of cosmic rays, high-energy charged particles that hurtle through outer space. Scientists still don’t understand where these particles come from or how they reach such intense energies.

Hunting for dark matter

We know that the Milky Way is rotating because when we look along it, we see some stars moving towards us and some stars moving away. But the speed at which our galaxy rotates is faster than it should be for the amount of matter we can see.

This leads scientists to believe that there is matter located in the center of our galaxy that we cannot see. Despite all of the other stuff going on there, this makes the inner galaxy the perfect hunting ground for this “dark matter,” an invisible substance that makes up most of the matter in the universe.

Scientists looking for dark matter take advantage of the fact that it likely interacts with itself. Researchers predict that when dark matter particles run into each other, they annihilate. They believe that this might produce a distinctive spectrum of gamma rays.

Over the past few years, scientists have detected an excess of gamma rays from the Milky Way’s galactic center. Many scientists believe that this could be a very strong signal for dark matter. The events look the way they would expect dark matter to look, and the energy spectrum and the way the gamma rays are concentrated resemble what scientists would expect from dark matter.

Other scientists believe that it is pulsars, not dark matter, that create this signal. Because the excess appears clumped, instead of smooth, scientists believe that it could be coming from compact sources like an ancient population of pulsars.

To determine whether this excess is a dark matter signal, scientists are looking for similar signatures elsewhere in the universe, in places like dwarf galaxies. These small galaxies are cleaner places to look for dark matter with a lot less going on, but the trade-off is that they do not produce as much gamma radiation.

Peering into the galactic center

The galactic center is clouded from our view by about 25,000 light-years of dust and gas, making it difficult to observe in visible light. Scientists have taken to studying different wavelengths, ranging from radio to gamma ray, to tackle the rich landscape of the galactic center. Among the instruments looking at the galactic center, there are a few that see in very different wavelengths.

The W.M. Keck Observatory, a two-telescope observatory near the summit of a dormant volcano in Hawaii, studies the galactic center in the infrared. The Chandra X-ray Observatory, a space observatory launched in 1999, observes the galactic center in X-rays.

Imaging Atmospheric Cherenkov Telescopes are ground-based detectors that scientists use to study gamma rays from the air showers they create when they smash into our atmosphere. The High Energy Stereoscopic System, or HESS, is the world’s largest Cherenkov telescope array and is located in Namibia.

The Fermi Gamma-ray Space Telescope is another observatory scientists use to investigate the galactic center. This is a satellite-based telescope that maps the whole sky at gamma-ray wavelengths. Since its launch in 2008, Fermi has been an important tool in probing the contents of the inner galaxy, from dark matter to pulsars to black holes.

The longer these instruments collect data, the closer we get to figuring out dark matter and untangling the mess of marvels at the heart of our galaxy.

The next big LHC upgrade? Software.

Compatible and sustainable software could revolutionize high-energy physics research.

The World Wide Web may have been invented at CERN, but it was raised and cultivated abroad. Now a group of Large Hadron Collider physicists is looking outside academia to solve one of the biggest challenges in physics: creating a software framework that is sophisticated, sustainable and more compatible with the rest of the world.

“The software we used to build the LHC and perform our analyses is 20 years old,” says Peter Elmer, a physicist at Princeton University. “Technology evolves, so we have to ask, does our software still make sense today? Will it still do what we need 20 or 30 years from now?”

Elmer is part of a new initiative funded by the National Science Foundation called the DIANA/HEP project, or Data Intensive ANAlysis for High Energy Physics. The DIANA project has one main goal: improve high-energy physics software by incorporating best practices and algorithms from other disciplines.

“We want to discourage physics from re-inventing the wheel,” says Kyle Cranmer, a physicist at New York University and co-founder of the DIANA project. “There has been an explosion of high-quality scientific software in recent years. We want to start incorporating the best products into our research so that we can perform better science more efficiently.”

DIANA is the first project explicitly funded to work on sustainable software, but it is not alone in the endeavor to improve the way high-energy physicists perform their analyses. In 2010, physicist Noel Dawe started the rootpy project, a community-driven initiative to improve the interface between ROOT and Python.

“ROOT is the central tool that every physicist in my field uses,” says Dawe, who was a graduate student at Simon Fraser University when he started rootpy and is currently a fellow at the University of Melbourne. “It does quite a bit, but sometimes the best tool for the job is something else. I started rootpy as a side project when I was a graduate student because I wanted to find ways to interface ROOT code with other tools.”

Physicists began developing ROOT in the 1990s in the computing language C++. This software has evolved a lot since then, but has slowly become outdated, cumbersome and difficult to interface with new scientific tools written in languages such as Python or Julia. C++ has also evolved over the course of the last twenty years, but physicists must maintain a level of backward compatibility in order to preserve some of their older code.

“It’s in a bubble,” says Gilles Louppe, a machine learning expert working on the DIANA project. “It’s hard to get in and it’s hard to get out. It’s isolated from the rest of the world.”

Before coming to CERN, Louppe was a core developer of the machine learning platform scikit-learn, an open source library of versatile data mining and data analysis tools. He is now a postdoctoral researcher at New York University and works closely with physicists to improve the interoperability between common LHC software products and the scientific Python ecosystem. Improved interoperability will make it easier for physicists to benefit from global advancements in machine learning and data analysis.

“Software and technology are changing so fast,” Cranmer says. “We can reap the rewards of industry and everything the world is coming up with.”

One trend that is spreading rapidly in the data science community is the computational notebook: a hybrid of analysis code, plots and narrative text. Project Jupyter is developing the technology that enables these notebooks. Two developers from the Jupyter team recently visited CERN to work with the ROOT team and further develop the ROOT version, ROOTbook.

“ROOTbooks represent a confluence of two communities and two technologies,” says Cranmer.

Physics patterns

To perform tasks such as identifying and tagging particles, physicists use machine learning. They essentially train their LHC software to identify certain patterns in the data by feeding it thousands of simulations. According to Elmer, this task is like one big “needle in a haystack” problem.

“Imagine the book Where’s Waldo. But instead of just looking for one Waldo in one picture, there are many different kinds of Waldos and 100,000 pictures every second that need to be analyzed.”

But what if these programs could learn to recognize patterns on their own with only minimal guidance? One small step outside the LHC is a thriving multi-billion dollar industry doing just that.

“When I take a picture with my iPhone, it instantly interprets the thousands of pixels to identify people’s faces,” Elmer says. Companies like Facebook and Google are also incorporating more and more machine learning techniques to identify and catalogue information so that it is instantly accessible anywhere in the world.

Organizations such as Google, Facebook and Russia’s Yandex are releasing more and more tools as open source. Scientists in other disciplines, such as astronomy, are incorporating these tools into the way they do science. Cranmer hopes that high-energy physics will move to a model that makes it easier to take advantage of these new offerings as well.

“New software can expand the reach of what we can do at the LHC,” Cranmer says. “The potential is hard to guess.”

Why are particle accelerators so large?

CERN physicist Edda Gschwendtner explains why we need big machines to study tiny particles.

The Large Hadron Collider at CERN is a whopping 27 kilometers in circumference. Edda Gschwendtner, physicist and project leader for CERN's plasma wakefield acceleration experiment (AWAKE), explains why scientists use such huge machines.

We can only see so much with the naked eye. To see things that are smaller, we use a microscope, and to see things that are further away, we use a telescope. The more powerful the tool, the more we can see.

Particle accelerators are tools that allow us to probe both the fundamental components of nature and the evolution and origin of all matter in the visible (and maybe even the invisible?) universe. The more powerful the accelerator, the further we can see into the infinitely small and the infinitely large.

You can think about particle accelerators like a racetrack for particles. Racecars don’t start out going 200 miles per hour—they must gradually accelerate over time on either a large circular racetrack or a long, straight road.

In physics, these two types of “tracks” are circular accelerators and linear accelerators.

Particles in circular accelerators gradually gain energy as they race through an accelerating structure at a certain position in the ring. For instance, the protons in the LHC make 11,000 laps every second for 20 minutes before they reach their collision energy. During their journey, magnets guide the particles around the bends in the accelerator and keep them on course.
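The lap count is easy to estimate. This sketch assumes the protons travel at essentially the speed of light and uses the commonly quoted ring circumference of 26.659 kilometers (the article rounds this to 27).

```python
SPEED_OF_LIGHT_M_S = 299_792_458.0
LHC_CIRCUMFERENCE_M = 26_659.0   # assumed commonly quoted ring length
RAMP_MINUTES = 20                # acceleration time mentioned in the text


def laps_per_second(circumference_m, speed_m_s=SPEED_OF_LIGHT_M_S):
    """Number of full turns per second for a particle at the given speed."""
    return speed_m_s / circumference_m


laps = laps_per_second(LHC_CIRCUMFERENCE_M)
total_laps = laps * RAMP_MINUTES * 60
print(f"laps per second: {laps:,.0f}")
print(f"laps during a {RAMP_MINUTES}-minute ramp: {total_laps:,.0f}")
```

Under these assumptions a proton circles the ring more than 13 million times before it reaches collision energy.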

But just like a car on a curvy mountain road, the particles’ energy is limited by the curves in the accelerators. If the turns are too tight or the magnets are too weak, the particles will eventually fly off course.
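Physicists quantify this limit with a standard rule of thumb: the momentum a ring can hold, in GeV/c, is about 0.3 times the magnetic field in teslas times the bending radius in meters. The sketch below applies it with assumed LHC design values of an 8.33-tesla dipole field and a 2804-meter bending radius; neither number appears in the article.

```python
# Conversion factor for a singly charged particle: c in units of 1e9 m/s.
RIGIDITY_FACTOR = 0.299792458

LHC_DIPOLE_FIELD_T = 8.33       # assumed nominal dipole field
LHC_BENDING_RADIUS_M = 2804.0   # assumed bending radius inside the dipoles


def max_momentum_gev(field_tesla, bending_radius_m):
    """Largest momentum (GeV/c) a singly charged particle can have and still
    follow a circle of the given radius in the given magnetic field."""
    return RIGIDITY_FACTOR * field_tesla * bending_radius_m


p_nominal = max_momentum_gev(LHC_DIPOLE_FIELD_T, LHC_BENDING_RADIUS_M)
p_doubled = max_momentum_gev(2 * LHC_DIPOLE_FIELD_T, LHC_BENDING_RADIUS_M)
print(f"nominal field: about {p_nominal:,.0f} GeV/c")
print(f"doubled field, same tunnel: about {p_doubled:,.0f} GeV/c")
```

The relationship is linear: to reach higher energy, either the magnets get stronger or the ring gets bigger.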

Linear accelerators don’t have this problem, but they face an equally challenging aspect: particles in linear accelerators only have the length of the track where they pass through accelerating structures to reach their desired energy. Once they reach the end, that’s it.

So if we want to look deeper into matter and further back toward the start of the universe, we have to go higher in energy, which means we need more powerful tools.

One option is to build larger accelerators—linear accelerators hundreds of miles long or giant circular accelerators with long, mellow turns.

We can also invest in our technology. We can develop accelerating structure techniques to rapidly and effectively accelerate particles in linear accelerators over a short distance. We can also design and build incredibly strong magnets—stronger than anything that exists today—that can bend ultra-high energy particles around the turns in circular accelerators.

Realistically, the future tools we use to look into the infinitely small and infinitely large will involve a combination of technological advancement and large-scale engineering to bring us closer to understanding the unknown.


Bump Watch 2016

A bump in the LHC data has physicists electrified…but what does it mean?

In December, the ATLAS and CMS experiments presented a sneak peek of the new data collected during the first few months of the Large Hadron Collider’s enormously energetic second run. Both experiments reported a small excess of photon pairs with a combined mass around 750 GeV. This small excess could be the first hint of a new massive particle that spits out two photons as it decays, or it might be a coincidental fluctuation that will disappear with more information.

Now, physicists are presenting their latest analyses at the Moriond conference in La Thuile, Italy, including a full investigation of this mysterious bump. After carefully checking, cross-checking and rechecking the data, both experiments have come to the same conclusion—the bump is still there.

“We’ve re-calibrated our data and made several improvements to our analyses,” says Livia Soffi, a postdoc at Cornell University. “These are the best, most refined results we have. But we’re still working with the same amount of data we collected in 2015. At this point, only more data could make a significant difference in our ongoing research.”

LHC physicists wield a myriad of powerful tools to investigate the mysteries of the universe: a 17-mile-long particle accelerator; huge and intricate particle detectors; a worldwide network of computing centers. But of all these resources, one tool can make or break any potential discovery: statistics.

In 2015, LHC scientists recorded data from 20 trillion proton-proton collisions. A few tens of thousands of these collisions simultaneously produced a clean, high-energy pair of photons. Around 1200 of these photon pairs have a combined energy of 125 GeV. (Scientists now know that Higgs bosons spat out about 100 of them; the other 1100 were produced by normal, well-known processes.) Moving toward higher energies, the spectrum starts to fluctuate more and more as fewer and fewer pairs are recorded. At around 750 GeV, scientists observed only a few dozen photon pairs, a handful more than predicted.

But whether this extra handful is evidence of a new particle or just another normal statistical fluctuation is essentially a coin toss.
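To see why a handful of extra events is hard to interpret, it helps to ask how often chance alone would produce an excess of that size. The sketch below is a rough illustration, not the method ATLAS or CMS actually use: it treats the count in one energy window as a Poisson process and computes the probability of observing at least a given number of photon pairs when a smaller number is predicted. The specific counts (30 predicted, 38 observed) are hypothetical stand-ins for "a few dozen" and "a handful more"; the article does not give the real numbers.

```python
import math


def poisson_tail(observed: int, expected: float) -> float:
    """Probability of counting `observed` or more events when `expected` are predicted.

    Uses P(N >= k) = 1 - sum_{n=0}^{k-1} exp(-b) * b**n / n! for a Poisson mean b.
    """
    below = sum(
        math.exp(-expected) * expected**n / math.factorial(n)
        for n in range(observed)
    )
    return 1.0 - below


# Hypothetical counts chosen only for illustration (not from the experiments).
expected_pairs = 30.0   # pairs predicted by known processes
observed_pairs = 38     # pairs actually counted: "a handful more"

p_value = poisson_tail(observed_pairs, expected_pairs)
print(f"Chance of a fluctuation at least this large: {p_value:.3f}")
```

A toy calculation like this looks at a single window in isolation. Real analyses also account for the many energy windows being searched at once and for uncertainty in the prediction itself, both of which make an apparent excess less remarkable.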

“In physics we sometimes see excesses due to statistical fluctuations as we go up to higher and higher energies,” says Massimiliano Bellomo, a postdoc at the University of Massachusetts, Amherst. “We’re currently at the very edge of our sensitivity and cannot confirm or exclude any of the bumps we’re watching until we have much more data.”

On its own, one small bump means nothing. But excitement builds when independent experiments start to see the same bump popping up over and over again.

“I saw this fluctuation while doing my PhD thesis with CMS data from Run 1 and didn’t think anything of it,” Soffi says. “Now CMS and ATLAS have both seen it again in the new data. This could easily be a coincidence. But if it keeps showing up, then we might have something.”

For their new analysis, the CMS experiment incorporated about 20 percent more data, which was recorded during Run 2 when the CMS magnet was turned off. They also recalculated the energies of particles recorded by the detector using more refined calibrations. After integrating these two improvements into their analysis, CMS is still seeing the bump, and it’s slightly more pronounced than before.

ATLAS scientists are also delving deeper into this mystery. This week they re-examined data collected from the first run of the LHC to see if this recalcitrant bump would make yet another appearance. Scientists performed two independent searches, each employing a slightly different method to classify and separate the photon pairs. In one analysis, scientists again saw a small excess of photon pairs at 750 GeV. But in the other analysis, they saw nothing out of the ordinary. A further investigation of the 13 TeV data from 2015 shows that the bump is still there, but still not significant.

“The bottom line is that we can’t say anything definitive until we have more data,” says Beate Heinemann, a researcher at the US Department of Energy’s Berkeley Laboratory and deputy spokesperson for the ATLAS experiment. “Therefore we are now focusing on getting ready to record and analyze the large amount of data that the LHC will deliver this year.”

Even with the limited amount of data, physicists are already looking ahead and speculating what this little bump could be if it grows over time. A popular and emerging theory is that it could be the first glimpse of a heavier cousin of the Higgs boson.

“We have discovered one Higgs boson with a mass of 125 GeV,” says Andrei Gritsan, a professor of physics at Johns Hopkins University. “We are trying to understand this boson deeper, but at the same time we are looking for other possible Higgs bosons with higher masses. We are excited about the excess at 750 GeV in one decay channel, but we need to establish if this signal is real and if it appears anywhere else before we can say something about it. All we can do today is hypothesize and speculate.”

If this bump is early evidence of a new boson, theorists predict that it will transform into a wide assortment of particles, not just two photons. For instance, a heavier cousin of the Higgs boson would likely behave like the known 125 GeV Higgs boson, which spits out a pair of Z bosons, a pair of W bosons, or a pair of photons when it decays.

Experimentalists recently grouped Z boson and W boson pairs with approximately the same energy and compared the number of pairs-per-group with the predicted totals, which were generated by thousands of computer simulations.

“Evidence of more pairs than predicted materializing yet again at 750 GeV would be exciting,” says Jim Olsen, a professor of physics at Princeton University. “And then the question would be if and how all the observations are related.”

But after performing these analyses, scientists are reporting that they haven’t observed anything out of the ordinary in any other channels. Scientists are also mapping the energies of Z bosons paired with a photon, a channel which theorists predict could be a goldmine for new physical phenomena. But the first results also show no anomalies.

Physicists are at the very beginning of LHC Run 2 and have collected about one-tenth as much data as they did during Run 1. The new data comes from collisions that are 1.6 times more energetic than Run 1 collisions and opens up a new energy regime that was not previously accessible. But physicists need time for the data to accumulate.

“We’re expecting to get a factor of 30 times more data over the course of the next three years, which will allow us to probe this higher mass range better,” says Bellomo. “We will resume collecting data again in late spring and should be able to say a lot more about this bump and other searches by the end of the summer.”

Dusting for the fingerprint of inflation with BICEP3

A new experiment at the South Pole picks up where BICEP2 left off.

When researchers with the BICEP2 experiment announced they had seen the first strong evidence for cosmic inflation, it was front-page news around the world. Inflation is the extremely rapid expansion of space-time during its first split second of existence, proposed to explain a number of puzzling properties of the universe, making the BICEP2 results a really big deal. Over the following months, though, the excitement evaporated: After combining their data with other experiments, the BICEP2 team showed that most or all of the signal attributed to inflation was likely produced by galactic dust inside the Milky Way.

But traces of inflation could still be hiding in the data, and that’s why scientists haven’t given up yet. BICEP3, the upgraded version of BICEP2, began collecting data yesterday. The first observations using the fully updated equipment will run through November.

The updated equipment involves many more detectors and ultimately many more pixels. Even more importantly, BICEP3 and its partner experiment, the Keck Array, cover a wider spectrum of light in hopes of teasing out the hidden signal of inflation from the choking dust around it.

“The thought was to put together something that's at least 10 times as powerful as BICEP2 in a single instrument,” says Zeeshan Ahmed, a Panofsky Fellow at the Department of Energy's SLAC National Accelerator Laboratory. Ahmed oversaw the design and installation of BICEP3 and now coordinates the operation of the BICEP3/Keck Array assembly at the South Pole. And what are BICEP3’s chances of avoiding the problems its predecessor experiment faced?

“We’re optimistic,” Ahmed says.

State of the universe

The BICEP (once an acronym, but now a stand-alone name) project is one of many observatories designed to measure the properties of light left over from the first 380,000 years of history after the big bang. That light is the cosmic microwave background (CMB), and it serves as a state-of-the-universe report from a time before there were any stars or galaxies.

In particular, if inflation happened immediately after the big bang, it would have produced turbulence in the structure of space-time itself—gravitational waves like the kind LIGO detected recently. While these waves would be too weak for LIGO to see, they would twist the orientation of the light, which is known as polarization. (Glare from wet pavement is also polarized light, which is why many sunglasses involve polarizing filters.)

“We already know that the gravitational waves exist. LIGO is more about studying the black holes than the gravitational waves. Likewise, the BICEP program is more about studying the beginning of the universe than trying to see the gravitational waves themselves,” says Chao-Lin Kuo, associate professor of physics at Stanford University and SLAC and principal investigator of BICEP3.

The amount of polarization from gravitational waves is very small, and its nature makes it hard to measure. However, polarization can still tell us a lot about the initial conditions of the cosmos, says Licia Verde of the University of Barcelona. Verde is a member of the Cosmic Origins Explorer (COrE) project, a proposed space-based mission from the European Space Agency to measure CMB polarization.

“That would be a way, possibly the only way, to ‘see’ all the way to the birth of the universe,” she says.

Other CMB observatories such as the Planck or WMAP satellites can measure polarization over narrow fields of view, but BICEP is designed with a wide-field view to look where the inflation signal might be dominant. The BICEP2 iteration of the project found a surprisingly strong polarization signal, but subsequent analysis of Planck data showed that galactic dust, made of large molecules not terribly different from the type underneath our beds, also polarizes light and can mimic gravitational wave effects.

“Almost all if not all of the BICEP2 signal can be attributed to dust,” Ahmed says. But not all is lost.

“It turns out the more [wavelengths] you have, the better you can disentangle the CMB signal from the dust signal.”

Flexing the BICEP

BICEP is a moderately sized telescope as these things go, about 8 feet tall and 2.5 feet in diameter, weighing in around three-quarters of a ton. The newest version of the camera has 20 tiles made of 64 pixels each, for 1280 pixels in total compared with BICEP2’s 256. While that’s a lot fewer than you’d find in an ordinary digital camera, these pixels record microwave light. Those wavelengths are longer than visible light and need larger pixels.

BICEP3’s pixels are also tuned to a different wavelength of microwave light than that used by BICEP2.

Meanwhile, the Keck Array is effectively five copies of BICEP2 mounted together in one telescope and operating at two complementary wavelengths. Together, these instruments should be able to characterize the dust obscuring any possible signal from the CMB.

Walt Ogburn is a Stanford physicist who works on BICEP3 operations at the South Pole during the Antarctic summer. He says the process of getting BICEP3 and the Keck Array running “has succeeded beyond expectations.”

Verde (who is not involved with the project) notes that the whole experiment is “a high-risk, high-gain pursuit”: There is a possibility that there is nothing to see at all if inflation didn’t happen or if the polarization from gravitational waves is too small.

On the other hand, observations by Planck and the Keck Array offer a glimmer of hope that BICEP3 will succeed if there’s anything to see. The type of dust in the Milky Way that BICEP2 saw appears to be simple, meaning that it polarizes light in some wavelengths more than others in a predictable way. The polarization from gravitational waves could peek out in wavelengths where the dust is less pronounced. Only by scanning across a range of wavelengths can researchers find the exact behavior of galactic dust across the microwave spectrum, in hopes of subtracting it away. Once that is done properly, what’s left may be gravitational waves from inflation.
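The idea of subtracting dust by comparing wavelengths can be sketched with a toy model. This is only an illustration under strong assumptions, not the BICEP3 or Keck Array analysis: it supposes that each measurement is the sum of a CMB signal that is the same at every wavelength and a dust signal whose relative strength at each wavelength is already known. The function name, the scaling factors and the signal values are all hypothetical. Under those assumptions, two measurements are enough to solve for the CMB part.

```python
def remove_dust(m_low: float, m_high: float, s_low: float, s_high: float) -> float:
    """Recover the wavelength-independent signal from two measurements.

    Toy model (an assumption, not the experiment's method):
        m_low  = cmb + s_low  * dust
        m_high = cmb + s_high * dust
    where s_low and s_high are the known relative dust strengths.
    Eliminating `dust` from the two equations gives the expression below.
    """
    if s_low == s_high:
        raise ValueError("dust must differ between the two bands to be separable")
    return (s_high * m_low - s_low * m_high) / (s_high - s_low)


# Hypothetical inputs in arbitrary units, chosen only for illustration.
true_cmb = 0.02            # signal common to both bands
true_dust = 0.5            # dust amplitude
s_low, s_high = 0.1, 1.0   # dust is ten times stronger in the second band

m_low = true_cmb + s_low * true_dust
m_high = true_cmb + s_high * true_dust

recovered = remove_dust(m_low, m_high, s_low, s_high)
print(f"recovered CMB-like signal: {recovered:.4f}")
```

In practice the dust behavior is not known in advance and every measurement carries noise, which is why observing at more wavelengths helps: the extra bands constrain how the dust changes across the microwave spectrum instead of requiring it as an input.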